Scribe notes by Hamza Chaudhry and Zhaolin Ren


See also video of lecture. Lecture slides: Original form: main / bandit analysis. Annotated: main / bandit analysis.

Sham Kakade is a professor in the Department of Computer Science and the Department of Statistics at the University of Washington, as well as a senior principal researcher at Microsoft Research New York City. He works on the mathematical foundations of machine learning and AI. He is the recipient of several awards, including the ICML Test of Time Award (2020), the IBM Pat Goldberg best paper award (2007), and the INFORMS Revenue Management and Pricing Prize (2014).

Sham is writing a book on the theory of reinforcement learning with Agarwal, Jiang and Sun.

Introduction:

Reinforcement learning has found success in a great number of fields because it is a very “natural framework” for interactive learning. It is based around the notion of experimenting with different behaviors in one’s environment and learning from mistakes to identify the optimal strategy. However, we lack a good understanding of how to design reinforcement learning algorithms when the agent is uncertain about its environment and potential rewards. It is therefore important to develop a theoretical foundation for studying generalization in reinforcement learning. The primary question these notes will address is as follows:

What are necessary representational and distributional conditions that enable provably sample-efficient reinforcement learning?

We will answer this question in the following parts.

- Part I: Bandits & Linear Bandits. “Bandit problems” correspond to RL where the environment is reset in each step (horizon H=1). This captures the unknown reward function of RL, but not the way the environment changes in response to the agent’s actions. This part is based on the papers Dani-Hayes-Kakade 08 and Srinivas-Kakade-Krause-Seeger 10.

- Part II: Lower Bounds. RL is very much not a solved problem in either theory or practice. Even the RL analog of linear regression, when the expected reward is a linear function of the actions, is not solved. We will see that this is for a good reason: there is an exponential lower bound on the number of steps it takes to find a nearly-optimal policy in this case. This part is based on the recent paper Weisz-Amortila-Szepesvári 20 and the follow-up Wang-Wang-Kakade 21.

- Interlude: Do these lower bounds matter in practice?

- Part III: Upper Bounds. Given the lower bound, we see that to get positive results (i.e. upper bounds on the number of steps) we need to make strong assumptions on the structure of rewards. A number of incomparable assumptions of this kind have been used, and we will see that there is a way to unify them. This part is based on the recent paper Du-Kakade-Lee-Lovett-Mahajan-Sun-Wang 21.

Before all of these parts, we will start by introducing the general framework of Markov Decision Processes (MDPs) and do a quick tour of generalization for static learning and RL.

Markov Decision Processes: A Framework for Reinforcement Learning

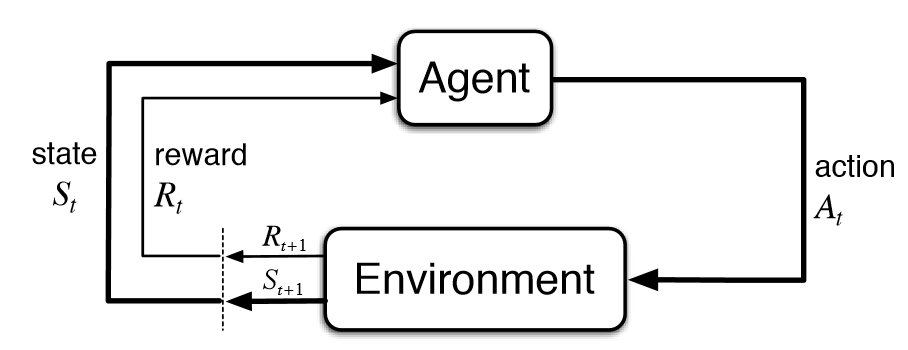

We have an agent in an environment at state $s_t$ that takes some action $a_t$, observes some reward $r_t$, and causes the environment to move to state $s_{t+1}$.

The following are some key terms that we will need throughout the rest of the notes:

- State Space, Action Space, Policy: We denote the state space as $\mathcal{S}$, and the action space as $\mathcal{A}$. A policy $\pi$ is a mapping from states to actions: $\pi: \mathcal{S} \to \mathcal{A}$.

- Trajectory: The sequence of states, actions, rewards an agent sees for a horizon of $H$ timesteps.

- State Value at time

: The expected cumulative reward starting from state

and using policy

afterwards.

- State Action Value at time

: The expected cumulative reward given a state-action tuple

starting from time

and using policy

afterwards.

- Optimal value and state-value function: we define an optimal policy by

, and the associated optimal

-function and value function by

and

respectively (or equivalently,

,

). Note that

and

can be defined via the Bellman optimality equation as follows:

where we additionally define for all

.

- Goal: To find a policy

that maximizes the cumulative H-step reward

starting from an initial state

with a horizon

. In the episodic setting, one starts at state

, acts for

steps, and then repeats.
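As a concrete illustration of the Bellman optimality recursion mentioned in the list above, here is a minimal Python sketch of backward induction on a tiny tabular MDP whose dynamics are known. The arrays `P` and `R`, the two-state example, and the convention that the value after the final step is zero are assumptions made for illustration; they are not taken from the lecture.

```python
def backward_induction(P, R, H):
    """Finite-horizon dynamic programming for a known tabular MDP.

    P[s][a] is a list of next-state probabilities, R[s][a] is the expected
    immediate reward, and H is the horizon. Returns (Q, V, policy), where
    Q[h][s][a] and V[h][s] are optimal values at step h and policy[h][s]
    is a greedy (hence optimal) action.
    """
    S, A = len(P), len(P[0])
    V = [[0.0] * S for _ in range(H + 1)]  # V[H] = 0: nothing left to collect
    Q = [[[0.0] * A for _ in range(S)] for _ in range(H)]
    policy = [[0] * S for _ in range(H)]
    for h in reversed(range(H)):
        for s in range(S):
            for a in range(A):
                expected_next = sum(P[s][a][s2] * V[h + 1][s2] for s2 in range(S))
                Q[h][s][a] = R[s][a] + expected_next
            policy[h][s] = max(range(A), key=lambda a: Q[h][s][a])
            V[h][s] = Q[h][s][policy[h][s]]
    return Q, V, policy


# Hypothetical 2-state, 2-action MDP: action 0 moves to state 0, action 1 moves
# to state 1, and being in state 1 pays reward 1 per step.
P = [[[1.0, 0.0], [0.0, 1.0]],
     [[1.0, 0.0], [0.0, 1.0]]]
R = [[0.0, 0.0],
     [1.0, 1.0]]
Q, V, policy = backward_induction(P, R, H=3)
```

When the MDP is unknown, `P` and `R` are not available, which is exactly why the exploration question discussed in the rest of these notes arises.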

Challenges in Reinforcement Learning

There are three main challenges that we face in reinforcement learning:

- Exploration: The total size and states of the environment may be unknown.

- Credit Assignment: We need to assign rewards to actions even if the rewards are delayed.

- Large State/Action Spaces: We face the curse of dimensionality.

We will deal with these problems by framing them in terms of generalization.

Part 0: A Whirlwind Tour of Generalization

Provable Generalization in Supervised Learning

As we have seen in the first lecture of this course, generalization is possible in the supervised learning setting when the data are drawn i.i.d. from a fixed distribution.

Specifically we have the following bound

Occam’s Razor Bound (Finite Hypothesis Class): To learn a policy that is

close to the best policy in a hypothesis class

, we need a number of samples that is

.

This means we can try lots of things on our data to see which hypotheses are $\epsilon$-best. To handle infinite hypothesis classes, we can replace the logarithm of the class size with various other “complexity measures” to obtain generalization bounds such as:

- VC Dimension:

- Classification (Margin Bounds):

- Linear Regression:

- Deep Learning: Algorithm also determines the complexity control

Another way to say this is that in all of these cases, we can bound the generalization gap by a quantity of the form

where

is some “complexity measure” of the class

and

is the number of samples.

One reference for these generalization results in the supervised learning setting is the following book by Sanjeev Arora and collaborators.

The key enabler of generalization in supervised learning is data reuse. For a given training set, we can in principle simultaneously evaluate the loss of all hypotheses in our class. For example, given the fixed ImageNet dataset, we can evaluate performance on any classifier. As we will see, this is not a property that will always hold in RL (when it does hold, sample-efficient generalization is likely to follow).

Sample Efficient RL in the Tabular Case with few states and actions (No Generalization involved):

Consider a tabular MDP setting where $S$, $A$ and $H$ denote the number of states, number of actions and length of the horizon respectively. Suppose we are operating in a setup where $S$ and $A$ are both small. Suppose also that the MDP is unknown.

Our goal in such a setting is to find an $\epsilon$-optimal policy $\pi$ such that $V^{\pi}(s_0) \ge V^{\pi^\star}(s_0) - \epsilon$, where $s_0$ is the initial state (for concreteness let’s assume it is deterministic), and $\pi^\star$ is truly an optimal policy for this MDP. Since we assume the number of states and actions to be small, it is possible to explore the entire world, and finding such an $\epsilon$-optimal policy is in principle possible. We thus do not have to consider any hypothesis class here (so no generalization involved), and can instead seek to be optimal under all possible mappings from states to actions.

Think for example of the following maze MDP, where the state of the world is the cell the agent is in and the action it can take at each state is a move to each of say 4 neighboring cells. Then, if we are able to get to every state and try every action there, we would have learned the world.

In this particular scenario, randomly exploring the world will allow us to learn the world. However, if we consider a modified random exploration strategy, where the probability of going left is significantly larger (say 5 times larger) than the probability of going right, then it will take exponential time to hit the goal state. In general, even for MDPs with small state and action spaces, a purely random exploration approach may be insufficient, as we may not be exploring the world enough. What alternative approach might we then adopt in order to achieve a sample-efficient learning algorithm?
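To make the slowdown of biased random exploration concrete, the following sketch computes the exact expected time for a random walk to cross a one-dimensional corridor. The corridor (cells `0..n`, with a left move from cell 0 staying in place) is a simplifying assumption standing in for the maze, and the function name is hypothetical. The recursion is `t_i = 1/p + (q/p) * t_{i-1}`, where `t_i` is the expected time to move from cell `i` to cell `i+1`.

```python
from fractions import Fraction


def expected_hitting_time(n, p_right):
    """Expected number of steps for a random walk on cells 0..n to first reach
    cell n starting from cell 0. The walk moves right with probability p_right
    and left otherwise; a left move from cell 0 stays at cell 0."""
    p = Fraction(p_right)
    q = 1 - p
    step_time = Fraction(0)  # expected time to advance one cell from the previous cell
    total = Fraction(0)
    for _ in range(n):
        step_time = 1 / p + (q / p) * step_time
        total += step_time
    return total


# Unbiased walk: time grows quadratically, n * (n + 1).
unbiased = expected_hitting_time(3, Fraction(1, 2))
# Left is 5 times more likely than right: time grows roughly like 5 ** n.
biased = expected_hitting_time(3, Fraction(1, 6))
```

With the unbiased walk the crossing time is polynomial in the corridor length, while the 5-to-1 bias makes each additional cell cost about five times more than the previous one.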

Theorem: (Kearns & Singh ’98). In the episodic setting, $\mathrm{poly}(S, A, H, 1/\epsilon)$ samples suffice to find an $\epsilon$-opt policy, where $S$ is the number of states, $A$ is the number of actions, and $H$ is the length of the horizon.

The above breakthrough result was the first to demonstrate that learning an $\epsilon$-opt policy is possible using just polynomially many samples. The key idea behind this is optimism and dynamic programming. In proving the result, the authors designed an algorithm called the E$^3$ algorithm (Explicit Explore or Exploit). The E$^3$ algorithm adopts a model-based approach, and relies on a “plan-to-explore” mechanism. As we act randomly, we will learn some part of the state space, and having learned this region well, we can accurately plan to escape it. This is where optimism comes in, since we give ourselves a bonus for escaping a region we know well.

Building on the Kearns and Singh result, there have been a number of follow-up works on the tabular MDP setting. One line of work seeks to improve the precise factors in the sample complexity.

Improvements on the sample complexity:

- A General Polynomial Time Algorithm for Near-Optimal Reinforcement Learning / Brafman-Tennenholtz 2002

- On the Sample Complexity of Reinforcement Learning – Kakade 2003 (PhD Thesis).

- Near-Optimal Regret Bounds for Reinforcement Learning – Jaksch, Ortner, Auer 2010

- Posterior sampling for reinforcement learning: worst-case regret bounds – Agrawal Jia 2017

Another line of work seeks to show that Q-learning, a model-free approach, can also achieve similar polynomial complexity, if an appropriate optimism bonus is incorporated.

Provable Q-Learning (+Bonus)

- PAC Model-Free Reinforcement Learning – Strehl-Li-Wiewiora-Langford-Littman 2006

- Algorithms for Reinforcement Learning – lecture by Szepesvári 2009

- Is Q-learning Provably Efficient? – Jin, Allen-Zhu, Bubeck, Jordan 2018

As the range and technical depth of the above results demonstrate, even the relatively simple tabular case is already challenging, and a precise, sharp characterization of the sample complexity is even more difficult. The chief source of difficulty is the unknown nature of the world (if the world were known, we could just run dynamic programming).

Provable Generalization in RL:

Ultimately, we want to move beyond small tabular MDPs, where a polynomial dependence of the sample complexity on $S$ is acceptable, and achieve sample-efficient learning in big problems where the state space could be massive. Think for instance of the game of Go.

In such a setting, requiring polynomially (in $S$) many samples is clearly unacceptable. This gives rise to the following question.

Question 1: Can we find an $\epsilon$-opt policy with no $S$ dependence?

In order to do so, it is necessary to reutilize data in some way since we will not be able to see all the possible states in the world. How then might we reuse data to estimate the value of all policies in a policy class ? A naive approach is the following:

- Idea: Trajectory tree algorithm

- Dataset Collection: Choose actions uniformly at random for all H steps in an episode.

- Estimation: Uses importance sampling to evaluate every

.
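The two steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the trajectory tree algorithm as published: policies are taken to be deterministic functions of the state, episodes are lists of `(state, action, reward)` triples, and the function name and toy data are hypothetical. An episode counts toward a policy only if the policy agrees with every logged action, and it is then reweighted by `num_actions ** H`, the inverse probability of that agreement under uniform random actions.

```python
from itertools import product


def importance_sampling_value(trajectories, policy, num_actions):
    """Estimate the H-step value of a deterministic `policy` from episodes whose
    actions were chosen uniformly at random."""
    total = 0.0
    for episode in trajectories:
        agrees = all(policy(s) == a for s, a, _ in episode)
        if agrees:
            weight = num_actions ** len(episode)
            total += weight * sum(r for _, _, r in episode)
    return total / len(trajectories)


# Hypothetical toy check with H = 2 and two actions: the state is the step index
# and the reward equals the action. The log contains each of the 4 action
# sequences exactly once, mimicking uniform random data collection.
logged = [[(h, a, float(a)) for h, a in enumerate(actions)]
          for actions in product(range(2), repeat=2)]
always_one = importance_sampling_value(logged, lambda s: 1, num_actions=2)
always_zero = importance_sampling_value(logged, lambda s: 0, num_actions=2)
```

The same `logged` dataset is reused to evaluate every policy, which is the data reuse that makes generalization possible. The `num_actions ** H` weight is also where the exponential dependence on the horizon enters.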

Theorem: (Kearns, Mansour, & Ng ’00) To find an

best in class policy, the trajectory tree algorithm uses

samples.

Observe that when $H=1$ (i.e. a contextual bandit) this is exactly the kind of generalization bound we saw in the Occam’s Razor bound for supervised learning. Since there may be stochasticity in the MDP, such that $S$ could be infinite or even uncountable, this dependence on the size of the policy class is a genuine improvement on the results for the tabular MDP setting, which depended polynomially on $S$. In this sense, this really is a generalization result, since we are learning an $\epsilon$-best-in-class policy without having seen the entire world (i.e. all the states in the world).

We note that the result depends only logarithmically on the hypothesis class size and, similar to the supervised learning setting, there are VC analogues as well. However, we cannot avoid the $A^H$ dependence to find an $\epsilon$-best-in-class policy agnostically (without assumptions on the MDP). To see why, consider a binary tree with $2^H$ policies and a sparse reward at a leaf node.

This dependence, while unavoidable without further assumptions, is clearly undesirable. This brings us to the following question.

Question 2: Can we find an opt policy with no

dependence and

samples?

As we just saw, agnostically we cannot learn an $\epsilon$-best-in-class policy without an $A^H$ dependence. However, as we will see, it is possible when appropriate assumptions are made. But what is the nature of the assumptions under which this kind of sample-efficient RL generalization is possible (when there is no (or mild) dependence on $S$ and $A$)? What assumptions are necessary? What assumptions are sufficient? We will seek to address these questions.

To do so, we start simple, and first look at the bandit and linear bandit problems, where the horizon is just 1. Note that this is still an interactive learning problem, except that we reset the episode after one time-step, and that it is an example of a problem with a potentially large action space.

Part 1: Bandits and Linear Bandits

Multi-Armed Bandits:

The multi-armed bandit problem is intimately interwoven with the theory of reinforcement learning. It is based around the question of how to allocate T tokens to A “arms” to maximize one’s return:

- Some Aspects of the Sequential Design of Experiments – Robbins 1952

- Bandit Processes and Dynamic Allocations Indices – Gittins 1979

- Asymptotically Efficient Adaptive Allocation Rules – Lai and Robbins 1985

Algorithms for this problem are very successful when $A$ is small. What can we do when $A$ is large?

Large-Action Case:

The learner has to decide which arm to pull. There is a widely used linear formulation of this problem that will help us understand generalization. The linear bandit model is successful in many applications (scheduling, ads, etc.).

Linear (RKHS) Bandits:

- Decision:

; Reward:

; Reward model:

- The hypothesis class

is a set of linear/RKHS functions (an overview of RKHS, which stands for Reproducing Kernel Hilbert Space, can be found here).

Linear/Gaussian Process Upper Confidence Bounds (UCB):

The principle underlying the Linear Bandits algorithm is optimism in the face of uncertainty:

Pick an input that maximizes the upper confidence bound:

Note that is the best estimate of the ground-truth and

is a standard deviation that we have to estimate. In choosing the term

, we have to navigate a trade-off between exploration and exploitation. As we can see, this algorithm will only pick plausible maximizers.

Regret of Linear-UCB / Gaussian Process-UCB (Generalization in Action Space)

Theorem: (Dani, Hayes, & K. ’08), (Srinivas, Krause, K., & Seeger ’10). Assuming

is an RKHS (with bounded norm), if we choose

“correctly”, then the regret satisfies

where $\tilde{O}$ hides logarithmic terms, and

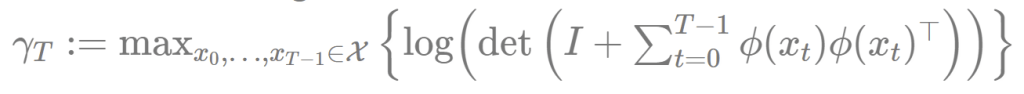

The key complexity concept here is “Maximum Information Gain”: , which one can think of as the “effective dimension,” determines the regret because

for

in

. Here are some relevant papers for further understanding regret, which is the difference between the reward of the best possible action and the reward of the action that was actually taken.

- Finite-time Analysis of the Multiarmed Bandit Problem – Auer Cesa-Bianchi Fischer 2002

- Improved Algorithms for Linear Stochastic Bandits – Abbasi-Yadkori, Pál, Szepesvári 2011

Linear Upper Confidence Bound Analysis

Handling Large Action Spaces

On each round, we must choose a decision . This yields a reward

, where

Above, is an unknown weight vector and

may be replaced by

if we have access to such a representation. Note that this tells us that the conditional expectation of

upon

is linear. We have a corresponding i.i.d. noise sequence

. If

are our decisions, then our cumulative regret in expectation is

where is an optimal decision for

, i.e.

LinUCB and the Confidence Ball

After t rounds, we can define our uncertainty region with center

and shape

using the

regularized least squares solution:

is a parameter of the algorithm and

determines how accurately we know

The LinUCB Algorithm can be understood as follows: For

- Execute

- Observe the reward

and update
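The loop above can be sketched as follows for a finite decision set. This is a hedged illustration, not the authors' implementation. It assumes the standard ellipsoidal confidence set built from the regularized matrix `Sigma_t = lam * I + sum of x x^T`, so that the optimistic value of a decision `x` has the closed form `mu_hat . x + sqrt(beta) * sqrt(x^T Sigma^{-1} x)`. It also uses a constant `beta` rather than a schedule, and a noiseless reward in the example. All function and variable names are hypothetical.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def mat_vec(M, v):
    return [dot(row, v) for row in M]


def lin_ucb(decisions, reward_fn, T, lam=1.0, beta=1.0):
    """Run T rounds of an optimistic linear bandit over a finite decision set."""
    d = len(decisions[0])
    # Track Sigma^{-1} directly; Sigma_0 = lam * I.
    sigma_inv = [[(1.0 / lam if i == j else 0.0) for j in range(d)] for i in range(d)]
    b = [0.0] * d  # running sum of reward * decision
    history = []
    for _ in range(T):
        mu_hat = mat_vec(sigma_inv, b)  # regularized least-squares estimate

        def ucb(x):
            width = math.sqrt(max(dot(x, mat_vec(sigma_inv, x)), 0.0))
            return dot(mu_hat, x) + math.sqrt(beta) * width

        x = max(decisions, key=ucb)  # optimism: pick the largest upper bound
        r = reward_fn(x)
        # Sherman-Morrison rank-one update of Sigma^{-1} after adding x x^T.
        u = mat_vec(sigma_inv, x)
        denom = 1.0 + dot(x, u)
        sigma_inv = [[sigma_inv[i][j] - u[i] * u[j] / denom for j in range(d)]
                     for i in range(d)]
        b = [b_i + r * x_i for b_i, x_i in zip(b, x)]
        history.append((x, r))
    return mat_vec(sigma_inv, b), history


# Hypothetical noiseless example: two orthogonal decisions, the second is better.
mu_star = (0.2, 0.8)
decisions = [(1.0, 0.0), (0.0, 1.0)]
mu_hat, history = lin_ucb(decisions, lambda x: dot(mu_star, x), T=50)
```

In this toy run the first decision is tried once, after which its upper confidence bound falls below that of the second decision, so the algorithm stops spending rounds on it.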

LinUCB Regret Bound

As the following theorem shows, the regret is sublinear with polynomial dependence on

and no dependence on the cardinality

of the decision space

.

Theorem (regret): (Dani, Hayes, Kakade 2008). Suppose we have bounded noise

;

;

, for

. Set

Then, with probability greater than

, for all

,

whereare absolute constants.

To prove the regret theorem above, we will require the following two lemmas.

Lemma 1 (Confidence): Let

. We have that

Lemma 2 (Sum of Squares Regret Bound): Define

. Suppose

is increasing and that for all

, we have

. Then,

We note that Lemma 2 actually depends on Lemma 1, since it assumes that for each

, a property that Lemma 1 tells us happens with probability at least

. We defer the proofs of the two lemmas to later, and first show why they can be used to prove the regret theorem.

Proof of regret theorem: Using the two lemmas above along with the Cauchy-Schwarz inequality, we have with probability at least that

The rest of the proof follows from our chosen value of .

We now proceed to sketch out the proofs of Lemma 1 (confidence bound) and Lemma 2 (sum of squares regret bound). We begin with showing why Lemma 2 holds.

Analysis and proof of Lemma 2 (sum of squares regret bound)

Our first auxiliary result bounds the pointwise width of the confidence ball.

Lemma (pointwise width of confidence ball). Let

. Consider any

. Then,

Proof. We have

where the first inequality follows from Cauchy-Schwarz and the second (i.e. last) inequality holds by the definition of and our assumption that

Let us now define

which we can think of as the “normalized width” at time in the direction of our decision. We have the following bound on the instantaneous regret

.

Lemma (instantaneous regret lemma). Fix

. If

, then

Proof. The basic idea is to use “optimism”. Let denote the vector maximizing the dot product

. By choice of

,

, where the inequality used the hypothesis that

. This manifestation of “optimism” is crucial, since it tells us that the “ideal” reward we think we can get at time

exceeds the optimal expected reward

. Hence,

where the last step follows from the pointwise width lemma (note is in

and

is assumed to be

by the hypothesis in Lemma 2). Since in the linear bandits setup we assumed that

for all

, the simple bound

holds as well. We may also assume for simplicity that

. This then yields the bound in the result.

In the next two lemmas, we use a geometric potential function argument to bound the sum of widths independently of the choices made by the algorithm (e.g. choice of and

sequence).

Geometric Lemma 1. We have

Proof. By definition of , we have

We complete the proof by noting that .

Geometric Lemma 2. For any sequence

such that for

,

, we have

Proof. Denote the eigenvalues of as

, and note

Using the AM-GM inequality,

We are now finally ready to prove Lemma 2 (sum of squares regret bound).

Proof of Lemma 2 (sum of squares regret bound).

Assume for all

. We have

where the first inequality follows from the instantaneous regret lemma, the second from that is an increasing function of

, the third uses the fact that for

, the final equality holds by Geometric Lemma 1, and the final inequality follows from Geometric Lemma 2.

This wraps up our discussion of Lemma 2.

Analysis of Lemma 1 (Confidence bound)

Recall that our goal here is to show that with high probability. We begin with the following result, which is a general version of the self-normalized sum argument in Dani, Hayes, Kakade 2008.

Lemma (Self-normalized bound for vector-valued martingales, Abbasi-Yadkori et al. 2011). Suppose

are mean zero random variables (can be generalized to martingales), and

is bounded by

. Let

be a stochastic process. Define

. With probability at least

, we have for all

,

Equipped with the lemma above, we are now ready to prove Lemma 1, which we will restate here again.

Lemma 1 (Confidence): Let

. We have that

Proof of Lemma 1. Since , we have

To get the last equality, we recall that . By the triangle inequality, it follows that we have

where the last inequality holds with probability at least for every

, using the self-normalized bound above, as well as the fact that

. Since

for any vectors

, it follows that with probability at least

, for every

,

where the final inequality is a consequence of the choice in the algorithm (where we recall the upper bound

), as well as Geometric Lemma 2. The result then follows by our choice of

, which is

where is an absolute constant (note in doing so we also subsumed the

term, simplifying the exposition).

We move on now to more challenging RL problems where the horizon is larger than 1, and explore lower bounds in this regime.

Part 2: What are necessary assumptions for generalization in RL?

Approximate dynamic programming with linear function approximation

We begin by considering generalization with a very natural assumption: suppose that the value function can be approximated by linear basis functions

We assume that the dimension of the representation, $d$, is low compared to the sizes of the state and action spaces. The idea of using linear function approximation in RL and dynamic programming is not new, and was explored in early works by Shannon (“Programming a digital computer for playing chess.”, Philosophical Magazine, 1950) as well as Bellman and Dreyfus (“Functional approximations and dynamic programming”, 1959). There has since been significant work on this approach; see e.g. Tesauro 1995, de Farias and Van Roy 2003, Wen and Van Roy 2013.

One natural question that arises is this: what conditions must the representation satisfy in order for this approach to work?

We proceed by studying the simplest possible case: assuming that the optimal $Q$-function $Q^\star$ is linearly realizable.

RL with linearly realizable $Q^\star$ function approximation: does there exist a sample efficient algorithm?

Suppose we have access to a feature map $\phi: \mathcal{S} \times \mathcal{A} \to \mathbb{R}^d$. Concretely, the assumption we consider is the following:

Assumption 1 (Linearly realizable

): Assume for all

that there exists

such that

As an aside, with Assumption 1, we can consider the problem from a linear programming viewpoint. Note that:

- We have an underlying LP with

variables and

constraints.

- The LP is specific to the dynamic programming problem at hand (and hence not general) because it encodes the Bellman optimality constraints.

- We have sampling access (in the episodic setting).

It may be tempting to think that Assumption 1 is sufficient to enable a sample-efficient algorithm for RL (if we assume we already know the representation ). However, that is not true, as the following theorem from a very recent work demonstrates:

Theorem 1 (Weisz, Amortila, Szepesvári 2021): There exists an MDP and a representation

satisfying Assumption 1, such that any online RL algorithm (with knowledge of

) requires

samples to output the value

up to constant additive error, with probability at least 0.9.

While linear realizability alone is insufficient for sample efficiency in online RL, one might consider imposing further assumptions that could suffice for sample-efficient RL. One candidate assumption is to assume that at each state, the optimal action yields significantly more value than the next-best action:

Assumption 2 (Large suboptimality gap): Assume for all

, we have

Perhaps surprisingly, the following theorem shows that an exponential lower bound for online RL remains under both Assumption 1 and Assumption 2.

Theorem 2 (Wang, Wang, Kakade 2021): There exists an MDP and a representation

satisfying both Assumption 1 and Assumption 2, such that any online RL algorithm (with knowledge of

) requires

samples to output the value

up to constant additive error, with probability at least 0.9.

Remark: We note a subtle distinction between the online RL setting and the simulator access setting. In the online RL setting, during each episode, we start at some state , and the subsequent states we see are entirely dependent on the policy we choose and the environment dynamics. Meanwhile, in the simulator access setting, at each time-step, we are free to input any

pair, and the simulator will return the next state

, as well as the reward

. While Theorem 1 (when only Assumption 1 is satisfied) holds for both online RL and simulator access, Theorem 2 (when both Assumption 1 and Assumption 2 hold) is valid only in the online RL setting. In the simulator access setting, Du et al. 2019 proved that there exists a sample-efficient approach (i.e. polynomial in all relevant problem parameters) to find an

-optimal policy, when both Assumption 1 and Assumption 2 hold. This demonstrates an exponential separation between the online RL and simulator access settings.

We next introduce the counterexample used to prove Theorem 2 in detail.

Construction sketch for counterexample in Theorem 2

Above, we have a pictorial representation of the MDP family in the counterexample. We first describe its state and action spaces.

- The state space is

. We use

to denote an integer, which we set to be approximately

.

- State

is a special state, which we can think of as a “terminal state”.

- At state

, the feasible action set is

. At state

, the feasible action set is

. Hence, there are

feasible actions at each state.

- Each MDP in this “hard” family is specified by an index

and denoted by

.

Before we proceed, we first recall the Johnson-Lindenstrauss lemma, which states that a set of points in a high-dimensional space can be embedded into a space of much lower dimension in such a way that the distances between the points are nearly preserved.

Johnson-Lindenstrauss Lemma: Suppose we are given

, a set

of

points in

, and a number

. Then, there is a linear map

such that

Consider a collection of

orthogonal unit vectors in the high-dimensional space

. For any two vectors

, after applying the linear embedding

, we observe by Johnson-Lindenstrauss that

where we used the fact that holds for any unit

by linearity of

. Hence, we can apply Johnson-Lindenstrauss to derive the following lemma, which will be useful in our construction.

Lemma 1 (Johnson-Lindenstrauss): For any

, there exists

unit vectors

in

such that

and

,

.

Throughout our discussion, we will set .
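The fact behind the lemma above, that a low-dimensional space can hold many nearly orthogonal unit vectors, is easy to observe numerically. The sketch below samples random Gaussian directions and measures the largest pairwise inner product. Random sampling is an assumption used here as a stand-in for the Johnson-Lindenstrauss existence argument; it is not the construction used in the paper, and the sizes `m=60`, `d=300` are arbitrary illustrative choices.

```python
import math
import random


def random_unit_vectors(m, d, seed=0):
    """Sample m random directions in R^d by normalizing Gaussian vectors."""
    rng = random.Random(seed)
    vectors = []
    for _ in range(m):
        g = [rng.gauss(0.0, 1.0) for _ in range(d)]
        norm = math.sqrt(sum(x * x for x in g))
        vectors.append([x / norm for x in g])
    return vectors


def max_abs_inner_product(vectors):
    """Largest |<v_i, v_j>| over all distinct pairs."""
    best = 0.0
    for i in range(len(vectors)):
        for j in range(i + 1, len(vectors)):
            ip = abs(sum(a * b for a, b in zip(vectors[i], vectors[j])))
            best = max(best, ip)
    return best


vectors = random_unit_vectors(m=60, d=300)
gamma_hat = max_abs_inner_product(vectors)
```

Typical pairwise inner products are on the order of `1 / sqrt(d)`, so `gamma_hat` stays well below 1 even though the vectors are far from exactly orthogonal. The number of such vectors can be taken exponentially large in `d` for a fixed tolerance, which is what the construction exploits.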

Equipped with the lemma above, we can now describe the transitions, features and rewards of the constructed MDP family. In the sequel, and

represent integers associated with the state and action respectively.

Transitions: The initial state follows the uniform distribution

. The transition probabilities are set as follows:

After taking action , the next state is either

or

. We might observe then that this MDP resembles a “leaking complete graph”. It is possible to visit any other state (except for

). However, importantly, there is at least

probability of going to the terminal state

. Also, observe that the transition probabilities are indeed valid, since by Lemma 1 above,

Features: The feature map, which maps state-action pairs to -dimensional vectors, is defined as

Note that the feature map is independent of and is shared across the MDP family.

Rewards: For , the rewards are defined as

For , we set

for every state-action pair.

We now verify that our construction satisfies both the linear realizability and large suboptimality gap assumptions (Assumption 1 and Assumption 2).

Lemma (Linear realizability). For all

, we have

.

Proof: Throughout, we assume that . We first verify the statement for the terminal state

. At the state

, regardless of the action taken, the next state is always

and the reward is always 0. Hence,

for all

. Thus,

. We next verify realizability for other states via backwards induction on

. The inductive hypothesis is

,

and that ,

When , (1) holds by the definition of the rewards at that level. Next, note that

, (2) follows from (1). This is because for

,

while (recall )

This means that proving (1) suffices to show that is always the optimal action. A simple verification via Bellman’s optimality equation suffices to prove the inductive hypothesis for (1) for every

, since the base case

holds. Thus, both (1) and (2) hold for all

, concluding our proof.

We next show that the constant suboptimality gap (Assumption 2) is also (approximately) satisfied by our constructed MDP family.

Lemma (Suboptimality gap). For all state

, and

, the suboptimality gap is

Hence, in this MDP, Assumption 2 is satisfied with.

Remark. Note that here we ignored the terminal state and the essentially unreachable state

for simplicity. This seems reasonable intuitively, since reaching

is effectively the end of the episode, and the state

can only be reached with negligible probability (recall that

is exponentially large). For a more rigorous treatment of this issue, refer to Appendix B in Wang, Wang, Kakade 2021.

We can now state and prove the following key technical lemma, which directly implies Theorem 2 in Wang, Wang, Kakade 2021.

Lemma. For any algorithm, there exists

such that in order to output

with

with probability at least 0.1 for, the number of samples required is

.

Proof sketch. We take an information-theoretic perspective. Observe that the feature map of does not depend on

, and that for

and

, the reward

also has no information about

. The transition probabilities are also independent of

, unless the action

is taken, and the reward at state

is always 0. Thus, to receive information about the optimal action

, the agent either needs to take the action

, or be at a non-game-over state at the final time step

(i.e

.)

However, by the design of the transition probabilities, the probability of remaining at a non-game-over state at the next time step is at most

Hence, for any algorithm, , which is exponentially small.

Summarizing, any algorithm that does not know either needs to “get lucky” so that

, or take the optimal action

. For each episode, the first event happens with probability less than

, and the second event happens with probability less than

. Since the number of actions is

, it follows that neither event can happen with constant probability unless the number of episodes is exponential in

. This wraps up the sketch.

We note that our construction is not quite rigorous due to the remark earlier that the suboptimality gap assumption does not hold for the states and

. A more rigorous construction can be found in Appendix B of Wang, Wang, Kakade 2021. Note that this lower bound is silent on the dependence of the sample complexity on the size of the action space, giving rise to the following question, which appears to be still unsolved.

Open problem: Could we get a lower bound that also depends on the action space dimension

, such that the number of samples required to obtain an approximately optimal policy scales with

Interlude: do these lower bounds matter in practice?

A natural question to ask is this: are these exponential lower bounds in Part 2 (when we only assume linear realizability) actually relevant for practice?

To answer this, we take a brief detour into offline RL. In offline RL (see Levine et al. 2020 for a survey), we assume that the agent has no direct access to the MDP, and is instead provided with a static dataset of transitions, (

denotes number of independent episodes in the offline data). The goal here could be to learn a policy

(based on the static dataset

) that attains the largest possible cumulative reward when applied to the MDP, or to evaluate the performance of some target policy

based on the offline data. We use

to denote the distribution over states and actions in

, such that we assume the state-action tuples

are sampled according to

, and the actions are sampled according to the behaviour policy, such that

.

Analogous to the online RL lower bound, the following theorem shows that linear realizability is also insufficient for sample-efficient evaluation of a target policy using offline data.

Theorem (informal, from Wang, Foster, Kakade 2020). In the offline RL setting, suppose the data distributions have (polynomially) lower bounded eigenvalues, and the $Q$-functions of every policy are linear with respect to a given feature mapping. Then, any algorithm requires a number of samples exponential in the horizon $H$ to output a non-trivially accurate estimate of the value of any given policy $\pi$, with constant probability.

Some remarks are in order. First, note that the above hardness result for policy evaluation also holds for finding near-optimal policies using offline data. For a simple reduction, consider an example where at the initial state, one action leads to a fixed reward and another action takes us to an instance which is hard to evaluate using offline data. Then, in order to find a good policy, it is necessary for the agent to approximately evaluate the value of the optimal policy in the hard instance. Second, an appropriate eigenvalue lower bound on the offline data distribution ensures that there is sufficient feature coverage in the dataset, without which linear realizability alone is clearly insufficient for sample-efficient estimation. Third, note that the representation condition in the theorem is significantly stronger than assuming realizability with regard to only a single target policy, and so the result carries over to the latter setting as well. Fourth, the key idea in proving the result is the error amplification (exponential in the horizon $H$) induced by the distribution shift from the offline policy to the target policy we wish to evaluate.

Empirical work performed in Wang et al. 2021 shows that these negative results do manifest themselves in experimental examples. The methodology considered by Wang et al. 2021 is as follows:

- Decide on a target policy to be evaluated, along with a good feature mapping for this policy (could be the last layer of a deep neural network trained to evaluate the policy).

- Collect offline data using trajectories that are a mixture of the target policy and another distribution (perhaps generated by a random policy).

- Run offline RL methods to evaluate the target policy using the feature mapping found in Step 1 and the offline data obtained in Step 2.

We note that features extracted from pre-trained deep neural networks should be able to satisfy the linear realizability assumption approximately (for the target policy). Moreover, the offline dataset is relatively favorable for evaluation of the target policy, since we would not expect realistic offline datasets to have a large number of trajectories from the target policy itself.

However, numerical results show substantial degradation in the accuracy of policy evaluation, even for a relatively mild distribution shift (e.g. where there is a 50/50 split in target policy and random policy in the offline data). As an example, consider the following plot.

The figure above depicts the performance of Fitted Q-Iteration (FQI) on Walker2d-v2, an environment from the OpenAI Gym benchmark suite which has a continuous action space. Here, the $x$-axis is the number of rounds of FQI used, and the $y$-axis is the square root of the mean squared error of the predicted values (smaller is better). The blue line corresponds to performance when the dataset is generated by the target policy itself with 1 million samples, and the other lines correspond to the performance when adding more offline data induced by random trajectories. As we can see, adding more random trajectories leads to significant degradation of FQI. See Wang et al. 2021 for more such experiments.
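To make the three-step protocol concrete, here is a minimal toy sketch in Python. It is not the setup of Wang et al. 2021: the small random tabular MDP, the i.i.d. transition sampling, and all function names are assumptions made for illustration. In particular, `make_features` places the exact $Q$-function of the target policy in the first feature coordinate so that realizability holds by construction, standing in for features taken from a trained network.

```python
import numpy as np

def make_mdp(n_states, n_actions, rng):
    # P[s, a] is a probability vector over next states; R[s, a] is the reward.
    P = rng.dirichlet(np.ones(n_states), size=(n_states, n_actions))
    R = rng.uniform(0.0, 1.0, size=(n_states, n_actions))
    return P, R

def exact_q(P, R, pi, gamma):
    # Solve Q(s, a) = R(s, a) + gamma * E[Q(s', pi(s'))] as a linear system.
    S, A = R.shape
    M = np.zeros((S * A, S * A))
    for s2 in range(S):
        M[:, s2 * A + pi[s2]] = P[:, :, s2].reshape(-1)
    return np.linalg.solve(np.eye(S * A) - gamma * M, R.reshape(-1)).reshape(S, A)

def make_features(q_pi, d, rng):
    # Step 1 (assumption): random features whose first coordinate is Q^pi,
    # so Q^pi is exactly linear in the features.
    S, A = q_pi.shape
    Phi = rng.normal(size=(S, A, d))
    Phi[:, :, 0] = q_pi
    return Phi

def collect(P, R, pi, n, random_fraction, rng):
    # Step 2 (simplified): i.i.d. transitions from uniformly drawn states;
    # each action comes from pi or, with probability random_fraction,
    # from the uniform random policy.
    S, A = R.shape
    s = rng.integers(S, size=n)
    use_random = rng.random(n) < random_fraction
    a = np.where(use_random, rng.integers(A, size=n), pi[s])
    s2 = np.array([rng.choice(S, p=P[si, ai]) for si, ai in zip(s, a)])
    return s, a, R[s, a], s2

def fitted_q_evaluation(Phi, data, pi, gamma, rounds):
    # Step 3: repeated least-squares regression onto bootstrapped targets.
    s, a, r, s2 = data
    X, X_next = Phi[s, a], Phi[s2, pi[s2]]
    theta = np.zeros(Phi.shape[-1])
    for _ in range(rounds):
        theta, *_ = np.linalg.lstsq(X, r + gamma * X_next @ theta, rcond=None)
    return theta

def rmse_on_policy(Phi, theta, q_pi, pi):
    # Root mean squared error of the predicted values along the target policy.
    idx = np.arange(len(pi))
    return float(np.sqrt(np.mean((Phi[idx, pi] @ theta - q_pi[idx, pi]) ** 2)))

rng = np.random.default_rng(0)
P, R = make_mdp(20, 3, rng)
pi = rng.integers(3, size=20)              # an arbitrary fixed target policy
q_pi = exact_q(P, R, pi, gamma=0.9)
Phi = make_features(q_pi, d=8, rng=rng)
for frac in (0.0, 0.5):
    data = collect(P, R, pi, n=2000, random_fraction=frac, rng=rng)
    theta = fitted_q_evaluation(Phi, data, pi, gamma=0.9, rounds=30)
    print(frac, rmse_on_policy(Phi, theta, q_pi, pi))
```

Varying `random_fraction`, `d`, and `rounds` lets one probe how the evaluation error responds to distribution shift in this toy setting. We make no claim that it reproduces the magnitude of the degradation reported on Walker2d-v2.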

These empirical results seem to affirm the hardness results in Wang et al. 2021 (offline RL) and Wang, Wang, Kakade 2021 (online RL), in that the definition of a good representation in RL is more subtle than in supervised learning, and certainly goes beyond just linear realizability.

Part 3: Sufficient conditions for provable generalization in RL

We have seen from Part 1 and Part 2 that finding an $\epsilon$-optimal policy with mild (e.g. logarithmic) dependence on

and

samples is NOT possible agnostically, or even with linearly realizable

. This leads us to the following question.

Q: What kind of assumptions enable provable generalization in RL?

In fact, under various stronger assumptions, sample-efficient generalization is possible in many special cases. Amongst others, these include

- Linear Bellman Completion [Munos 2005, Zanette et al. 2020]

- Linear MDPs (low-rank transition matrix) [Wang and Yang 2018; Jin et al. 2019]

- Linear Quadratic Regulators (LQR): standard control theory model (see e.g. Wikipedia page for LQR)

- FLAMBE/Feature Selection [Agarwal, Kakade, Krishnamurthy, Sun 2020]

- Linear Mixture MDPs [Modi et al. 2020, Ayoub et al. 2020]

- Block MDPs [Du et al. 2019]

- Factored MDPs [Sun et al. 2019]

- Kernelized Nonlinear Regulator [Kakade et al. 2020]

What structural commonalities are shared between these underlying assumptions and models? To answer this question, we go back to the start, and revisit the case of linear bandits (the $H=1$ RL problem) for intuition.

Intuition from properties satisfied by linear bandits

We consider linear contextual bandits, where the context is , the action is

and

denote the state (or context) and action space respectively. We assume as before that associated with each state-action pair is a representation

. The observed reward is

, where

is a mean-zero stochastic noise term. The hypothesis class is

where is a subset of

. We let

denote the greedy policy for

, i.e.

An important structural property satisfied by linear contextual bandits is the following: data reuse. Indeed, the difference between any and the observed reward

is estimable when we had in fact played

for some hypothesis

. Via direct calculation, we see that

Intuitively, assuming that induces “sufficient exploration” of the

$d$-dimensional representation space, this implies that we can evaluate the quality of any policy/hypothesis

using just the one set of data collected by

. This is precisely the kind of data reuse property we saw for supervised learning, which enables sample-efficient generalization there. This suggests that to ensure sample-efficient generalization in general RL, it may be fruitful to look for assumptions that enable data reuse. One special case where such data reuse is possible is the class of linear Bellman complete models.
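The data reuse property for linear bandits can be checked numerically. The sketch below is an illustration under assumed notation, not taken from the lecture: `w_star` is the unknown true parameter, `w_g` is the hypothesis whose greedy policy collects the data, and each other hypothesis is a weight vector `w_f`. It compares the average gap between predicted and observed reward on data collected by the greedy policy of `w_g` with the bilinear form $\langle w_f - w^\star, \bar{\phi} \rangle$, where $\bar{\phi}$ is the mean feature played.

```python
import numpy as np

rng = np.random.default_rng(0)
d, n_actions, n = 5, 4, 20000
w_star = rng.normal(size=d)      # unknown true parameter (hypothetical)
w_g = rng.normal(size=d)         # hypothesis whose greedy policy collects data

# One feature vector phi(s, a) per (context, action) pair.
Phi = rng.normal(size=(n, n_actions, d))
actions = np.argmax(Phi @ w_g, axis=1)        # greedy actions under g
phi_played = Phi[np.arange(n), actions]       # features actually played
rewards = phi_played @ w_star + 0.1 * rng.normal(size=n)

def empirical_discrepancy(w_f):
    # Average gap between f's predicted reward and the observed reward,
    # computed only from the data collected by the greedy policy of g.
    return float(np.mean(phi_played @ w_f - rewards))

def bilinear_form(w_f):
    # <w_f - w_star, mean played feature>; uses w_star only to check the identity.
    return float((w_f - w_star) @ phi_played.mean(axis=0))

hypotheses = rng.normal(size=(3, d))
gaps = [abs(empirical_discrepancy(w) - bilinear_form(w)) for w in hypotheses]
print(gaps)
```

The two quantities differ only by the average noise, so the same dataset serves every hypothesis `w_f` at once. Whether that dataset is informative about a particular `w_f` still depends on how well the played features cover the representation space.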

Special case: linear Bellman complete class

Let be the length of each episode as before. We recall that a hypothesis class

is realizable for an MDP

if there exists a hypothesis

such that

where is the optimal state-action value at time step

. Having defined realizability, we are now ready to define the notion of linear Bellman completeness.

Definition (linear Bellman complete). A hypothesis class

, with respect to some known feature

, is linear Bellman complete for an MDP

if

is realizable and there exists

such that for all

and

,

For any , let

be the greedy policy associated with

. By the definition of a linear Bellman complete class, it follows that given some fixed

, for any

, we have

This shows that data reuse is possible for any linear Bellman complete class , since any

can be evaluated using offline data collected by some fixed policy

.

As an aside, note that linear Bellman completeness is a very strong condition that can break when new features are added. This is because adding new features expands the hypothesis space (of linear functions), and there is no guarantee that the new hypothesis class will again satisfy linear Bellman completeness.
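As a concrete instance of the completeness condition, the following sketch checks numerically that a small linear MDP is linear Bellman complete: the one-step Bellman backup of any linear function of the features is again linear in the same features. The construction is illustrative only. The names `Phi`, `mu`, `theta_r` are assumptions, the backup is a single undiscounted step, and we ignore the per-time-step indexing of the definition.

```python
import numpy as np

rng = np.random.default_rng(0)
S, A, d = 6, 3, 4
Phi = rng.dirichlet(np.ones(d), size=(S, A))  # phi(s, a) on the simplex, shape (S, A, d)
mu = rng.dirichlet(np.ones(S), size=d)        # mu[i] is a distribution over next states
theta_r = rng.uniform(size=d)

P = Phi @ mu         # P[s, a, s'] = <phi(s, a), mu[:, s']>, a valid transition kernel
R = Phi @ theta_r    # r(s, a) = <phi(s, a), theta_r>

def bellman_backup(theta):
    # (T Q_theta)(s, a) = r(s, a) + E_{s'}[max_{a'} <phi(s', a'), theta>]
    v = np.max(Phi @ theta, axis=1)
    return R + P @ v

def backup_weights(theta):
    # Candidate weight vector that represents the backup linearly.
    v = np.max(Phi @ theta, axis=1)
    return theta_r + mu @ v

theta = rng.normal(size=d)
residual = np.max(np.abs(bellman_backup(theta) - Phi @ backup_weights(theta)))
print(residual)
```

The residual is zero up to floating-point error for every `theta`, because both the reward and the transition kernel are linear in the features. For a general MDP with linearly realizable optimal values, no such weight vector need exist.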

It turns out that linear Bellman complete classes are just one example of Bilinear Classes (Du et al. 2021), which encompass many RL models in which sample-efficient generalization has been shown to be possible.

Bilinear Classes: structural properties to enable generalization in RL

We assume access to a hypothesis class, which can be an abstract set that permits both model-based and value-based hypotheses. We assume that for all

, there is an associated state-action value function

and a value function

for each

. As before, let

denote the greedy policy with respect to

, and let

denote

. We can now introduce the Bilinear Class.

Definition (Bilinear Class). Consider an MDP , a hypothesis class

, a discrepancy function

(defined for each

). Suppose

is realizable in

and that there exist functions

and

for some

. Then,

forms a Bilinear Class for

if the following two conditions hold.

- Bilinear regret: the on-policy difference between the claimed reward and the true reward satisfies the following upper bound,

- Data reuse:

As an example to demonstrate what the choices of and

might look like, for a linear Bellman complete class

, we can choose

Above, note that for all

for linear Bellman complete classes, and that the discrepancy function

in this case does not depend on

. As demonstrated in Du et al. 2021, the following models (in which sample-efficient generalization is known to be possible) can all be shown to be Bilinear Classes for some discrepancy function

:

- Linear Bellman Completion [Munos 2005, Zanette et al. 2020]

- Linear MDPs (low-rank transition matrix) [Wang and Yang 2018; Jin et al. 2019]

- Linear Quadratic Regulators (LQR): standard control theory model (see e.g. Wikipedia page for LQR)

- FLAMBE/Feature Selection [Agarwal, Kakade, Krishnamurthy, Sun 2020]

- Linear Mixture MDPs [Modi et al. 2020, Ayoub et al. 2020]

- Block MDPs [Du et al. 2019]

- Factored MDPs [Sun et al. 2019]

- Kernelized Nonlinear Regulator [Kakade et al. 2020]

- and more (see Du et al. 2021 for details.)

Bilinear Classes can be seen as a generalization of Bellman rank (Jiang et al. 2017) and Witness rank (Wen et al. 2019), which were previous works that sought to identify structural commonalities between different RL models that enable sample-efficient generalization. That being said, there are still models (with known provable generalization) which Bilinear Classes do not cover. Two such exceptions are the deterministic linear $Q^\star$ model (Wen and Van Roy 2013) and the $Q^\star$-state aggregation model (Dong et al. 2020). On a heuristic level, the structural commonalities identified by the Bilinear Classes show that to a large extent, most RL models known to enable sample-efficient generalization resemble linear bandits, in that data reuse is possible. In this sense, understanding why generalization is possible in the linear bandit case gives one intuition for why generalization is possible in these other cases as well. On some level, this may be disappointing since we might hope to capture richer phenomena than just linear bandits, but promisingly, there is a rich class of RL models which share these structural commonalities that enable generalization in RL (as the examples encompassed by the Bilinear Classes demonstrate).

Conclusion

From the discussion above, we see that a generalization theory for RL, while significantly distinct from that for supervised learning, is still possible. However, natural assumptions that might seem adequate, such as linear realizability, are in fact insufficient, and much stronger assumptions are required. One such example of sufficient assumptions is the Bilinear Class, which covers a rich set of models. Moreover, as the empirical results we saw in the interlude show, these representational issues identified by theory are relevant for practice. For more on the theory of RL, see the following forthcoming book.