AI is a Meteor. Don’t be a Dinosaur.

This is a linkpost for my Harvard Crimson op-ed for its commencement issue. I will not reproduce the whole text here, but my advice to the Class of 2026 comes in the following parts: My advice for the Class of 2026 is to embrace AI as a technology, but treat it critically as citizens. ... Throughout your …

The state of AI safety in four fake graphs

Here is a quick overview of my intuitions on where we are with AI safety in early 2026: So far, we continue to see exponential improvements in capabilities. This is most visible in the famous “METR graph”, but the trend is clear in many other metrics, including revenue. If you squint, you can even see …

Mass surveillance, red lines, and a crazy weekend

[These are my own opinions, and do not represent OpenAI. Crossposted on LessWrong] AI has so many applications, and AI companies have limited resources and attention span. Hence if it were up to me, I’d prefer we focus on applications that are purely beneficial (science, healthcare, education) or even commercial, before working on anything related …

Trevisan Award for Expository Work

[Guest post by Salil Vadhan; Boaz's note: I am thrilled to see this award. Luca's expository writing on his blog, surveys, and lecture notes was and is an amazing resource. Luca had a knack for explaining some of the most difficult topics in intuitive ways that cut to their heart. I hope it will inspire …

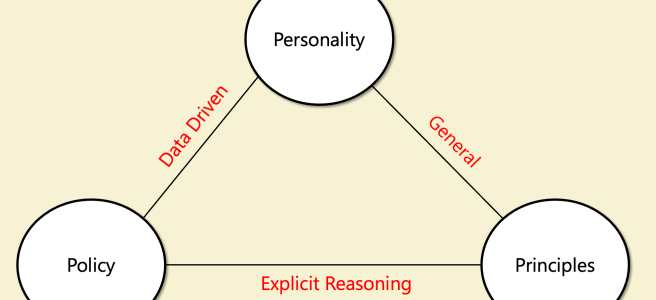

Thoughts on Claude’s Constitution

[I work on the alignment team at OpenAI. However, these are my personal thoughts, and do not reflect those of OpenAI. Crossposted on LessWrong] I have read with great interest Claude’s new constitution. It is a remarkable document which I recommend reading. It seems natural to compare this constitution to OpenAI’s Model Spec, but while the documents …

TheoryFest 2026 Call for Workshops (guest post by Mary Wootters)

TheoryFest 2026 will hold workshops during the STOC 2026 conference week, June 22–26, 2026, in Salt Lake City, Utah, USA. We invite groups of interested researchers to submit workshop proposals! See here for more details: https://acm-stoc.org/stoc2026/callforworkshops.html Submission deadline: March 6, 2026

Thoughts by a non-economist on AI and economics

[Crossposted on LessWrong] Modern humans first emerged about 100,000 years ago. For the next 99,800 years or so, nothing happened. Well, not quite nothing. There were wars, political intrigue, the invention of agriculture -- but none of that stuff had much effect on the quality of people's lives. Almost everyone lived on the modern equivalent …

CS 2881: AI Safety

The webpage for my AI safety course is at https://boazbk.github.io/mltheoryseminar/ and includes homework zero, a video of the first lecture, and slides. Future blog posts related to this course will be posted on LessWrong, since many people interested in AI safety visit that site. Video of the first lecture: https://youtu.be/-NCiWaRS6So Some snapshots from the slides:

AI Safety Course Intro Blog

I am teaching CS 2881: AI Safety this fall at Harvard. This blog is primarily aimed at students at Harvard or MIT (where we have a cross-registering agreement) who are considering taking the course. However, it may be of interest to others as well. For more of my thoughts on AI safety, see the blogs …

Machines of Faithful Obedience

[Crossposted on LessWrong] Throughout history, technological and scientific advances have had both good and ill effects, but their overall impact has been overwhelmingly positive. Thanks to scientific progress, most people on earth live longer, healthier, and better than they did centuries or even decades ago. I believe that AI (including AGI and ASI) can do …