(Edit: scribe notes on my lectures on this topic are now up.)

Subhash Khot, Dor Minzer and Muli Safra just posted an exciting manuscript online. In it, they confirm the combinatorial hypothesis I’ve posted about before on the structure of non-expanding set in the degree two short-code graph (or, equivalently, in the Grassman graph). Together with their prior work with Dinur and Kindler, and a small assist of an expansion-to-testing reduction of Kothari, Steurer and I, this establishes (an imperfect completeness variant of) Khot’s two to one conjecture and the intermediate complexity conjecture. This also makes significant progress towards proving, or at least giving evidence for, the more famous unique games conjecture (UGC). In fact, as I explain below, there is a technical sense in which it goes “halfway” towards proving the UGC.

The Unique Games () problem with parameters

is the following: given a set of linear equations each involving at most two variables over some finite field, to distinguish between the completeness case where there exists an assignment to the variables satisfying at least

fraction of the equations, and the soundness case where every assignment satisfies fewer than a

fraction.

Clearly the problem becomes easier the larger the gap between

and

. The unique games conjecture is that the problem is as hard as it can be, in the sense that it is NP hard for

arbitrarily close to one and

arbitrarily close to zero (as a function of the field size which we assume tends to infinity in what follows). In other words, the difficulty of

as a function of

and

is conjectured by the UGC to look like this:

(Note that the problem does not make sense when  . Also, in this figure we ignore regions of measure zero. In particular for

. Also, in this figure we ignore regions of measure zero. In particular for  or

or  the problem can be solved by a semidefinite program where the precise

the problem can be solved by a semidefinite program where the precise  factors depend on the field size; it is known that if the UGC is true then this dependence is optimal.)

factors depend on the field size; it is known that if the UGC is true then this dependence is optimal.)

Until today, what was known about unique games could be summarized in this (not to scale) figure:

That is, when and

are sufficiently close to each other,

was known to be NP hard (see for example this paper) and in fact with a linear-blowup reduction establishing exponential hardness (i.e.

under the exponential time hypothesis. On the other hand, when either the completeness parameter is sufficiently close to one or the soundness is sufficiently close to zero, there was a known subexponential time algorithm of Arora, Steurer and I (see also here) for

. (That is, an algorithm running in time

for some

which tends to zero as either completeness tends to one or soundness tends to zero.)

However, that algorithm was of course only an upper bound, and we did not know whether it could be improved further to or even polynomial time. Moreover, its mere existence showed that in some sense the techniques of the previously known NP hardness results for

(which used a linear blow up reduction) are inherently inapplicable to establishing the UGC which requires hardness in a completely different regime.

The new result of Khot, Minzer and Safra (when combined with the prior ones) shows that is NP hard for

arbitrarily close to half and

arbitrarily close to zero. (The result is presented as hardness of 2 to 2 games with completeness close to one, but immediately implies hardness of unique games with completeness close to half.) That is, the new picture of unique games’ complexity is as follows:

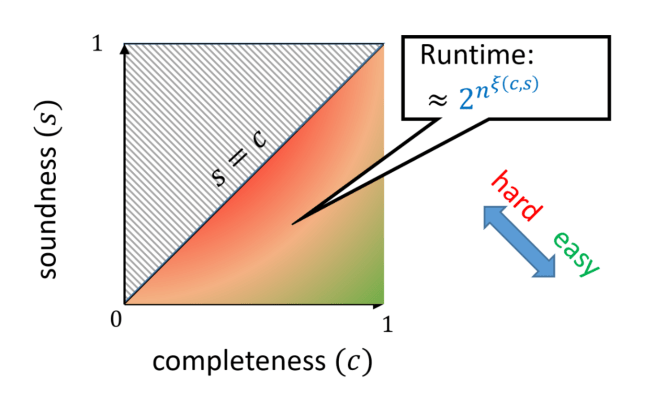

This establishes for the first time hardness of unique games in the regime for which a sub-exponential time algorithm was known, and hence (necessarily) uses a reduction with some (large) polynomial blowup. While it is theoretically still possible for the unique games conjecture to be false (as I personally believed would be the case until this latest sequence of results) the most likely scenario is now that the UGC is true, and the complexity of the problem looks something like the following:

That is, for every , the best running time is roughly

where

is a function that is always positive but tends to zero as

tends to one or

tends to zero (and achieves the value one in a positive measure region of the plane). Of course we are still yet far from proving this, let alone characterizing this function, but this is still very exciting progress nonetheless.

I personally am also deeply interested in the question of whether the algorithm that captures this curve is the sum of squares algorithm. Since SoS does capture the known subexponential algorithms for unique games, the new work provides more evidence that this is the case.

Picture indicates that it only goes 1/4 of the way toward proving the UGC.

🙂