One of the mysteries of computation is that, as far as we can tell, the time complexity of many natural computational problems is either (with a small exponent, such as

or

) or at least

(with a not too small coefficient in the exponent). This is perhaps the main reason why we are excited when people discover an

(or even

) time algorithm for an important problem X: we view this as evidence that X is of the EASY type, and the exponent is likely to be improved over time (as indeed most often happens).

Similarly, this is why we care about hardness reductions, even when they hide huge constants or exponents: we view them as evidence that a problem is of the HARD type, and expect (as is also often the case) the efficiency of the reduction to be eventually improved.

This is also why our asymptotic theory of efficiency in informative in a finite world, and we don’t spend too much time worrying about the fact that for every input length

that could possibly fit in the world’s storage capacity.

Of course there are results such as the time hierarchy theorem and Ladner’s theorem that tell us that “intermediate complexity” do exist, but the hope is that this doesn’t happen for “natural” problems.

(In particular, we can artificially create problems of complexity or

by padding exponentially hard problems. This is one of the reasons why Impagliazzo Paturi and Zane argued that for NP problems, the parameter

should be thought of the solution size and not the input size.)

One family of natural problems are constraint satisfaction problems (CSPs). For some finite alphabet and constant

, a CSP

is characterized by a set

of predicates from

.

The input for is a collection of functions

, each of which applies some predicate in

to a

-subset of its coordinates.

The basic task in CSPs is exact satisfiability, where the goal is to tell whether there is some assignment for the inputs that such that

.

The celebrated CSP dichotomy conjecture (which perhaps should now be called a theorem) implies (in its modern, algebraic form) that for every , either there is a polynomial-time (in fact I believe that at most

time) algorithm for the exact satisfiability of

or there is a constant-factor blowup reduction from 3SAT to

, implying that (under the exponential-time hypothesis or ETH) its complexity is

.

The approximation problem for is parameterized by two numbers

(known as the soundness and completeness paramters) and asks to distinguish, given an input

of

, between the case that there is some assignment

such that

, and the case that

for every

. There is one sense in which understanding the complexity of the approximation problem is easier, since in the context of approximation, the pesky difference between 3SAT and 3XOR (bane of many failed NP vs P proofs, and the main reason the dichotomy conjecture is so hard to prove) disappears. On the other hand, in this context a problem such as BIPARTITNESS (or

), which was in the EASY column of exactly solving, become the NP-hard problem of Max-Cut.

One of the most fascinating consequences of the Unique Games Conjecture is that, if it is true, then there are in fact CSPs for which their approximation is neither EASY nor HARD. That is, the Unique Games Conjecture (plus the ETH) implies the following conjecture:

Intermediate Complexity Conjecture: For every

, there exist some

,

and

such that

vs

approximation of

can be done in time

but (the potentially easier)

vs

approximation cannot be done in time

.

This is a consequence of the subexponential time algorithm for unique games, which in particular implies that for, say, , there is some

that tends to zero with

such that the

vs

problem for the unique games CSP can be solved in time

.

On the other hand, the Unique Games Conjecture implies that for every , no matter how close

is to zero, or

is to

, the

vs

problem for unique games is NP hard and hence (under the ETH) cannot be solved in time

.

I find this conjecture very fascinating. A priori, we might expect a zero one law for CSP’s complexity, where, depending on the approximation quality, one could either solve the problem in time or require at least

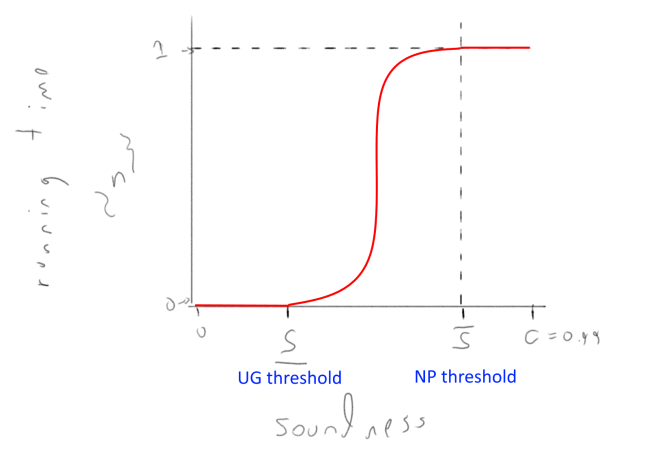

time. Indeed, we have good reasons to believe that predicates such as 3XOR and 3SAT satisfy such a zero/one law. But, if the intermediate complexity conjecture is true then for some predicates (such as unique games itself, and probably even max cut, which can hardly be called “unnatural”) we would get a non-trivial curve of the running time vs approximation quality, that looks something like this:

Figure 1: Conjectured time complexity of vs

approximation of unique games as a function of

assuming the unique games conjecture. The UGC characterizes the threshold

when the time complexity becomes

for some

, while there is also a (not yet fully determined) threshold

when there is a linear blowup reduction from 3SAT and hence (under the ETH) the complexity is

.

(One other setting where people were not sure if the complexity of some finitely specified object must be either exponential or polynomial is group theory, where one can define the complexity of a finitely generated group as the number of distinct elements that can be generated by a word of length $n$. There it turned out there are groups of “intermediate complexity”.)

What is also quite interesting is that we might be able to prove the intermediate complexity conjecture without resolving the UGC. In an exciting recent work, Dinur, Khot, Kindler, Minzer and Safra gave a combinatorial hypothesis that, if true, would imply the NP hardness of vs

approximation for unique games where

, and

can be arbitrarily close to

. Thus, if their hypothesis is true then, combined with the subexponential time algorithm mentioned above, it would imply the intermediate complexity conjecture. (If the construction is improved to obtain completeness

that is close to

then of course it would prove the unique games conjecture as well.)

Through discussions with Pravesh Kothari and David Steurer, we showed that the DKKMS combinatorial hypothesis is equivalent to a fairly simple to state statement. Informally, it can be stated as follows: let be the graph on

binary matrices, such that two matrices

have an edge if

has rank one over GF(2). (UGC/PCP experts might recognize this graph as the degree two short code graph.) This graph is not a small set expander in the sense that, if you think about it for few minutes, you see that there are sets

matrices of measure

such that if we pick a random

and a random neighbor

of

, then with constant probability

will also be in

. The DKKMS hypothesis is equivalent to the conjecture that these sets that you think about after few minutes encompass all such examples.

Let me state this more formally: Lets say that a subset is a basic set if

or

where

are column vectors. Note that each such set has measure

among all matrices and that a random neighbor of an element in

will be in

with probability

. For every constant

, you can clearly construct a set where a random neighbor stays inside with probability

by intersecting

basic sets. You can also keep this probability constant even if you just subsampled a constant fraction of this intersection (and you can add a few random vertices as well). The DKKMS hypothesis is equivalent to the statement that this is all you can do. That is, it is equivalent to the following statement:

Inverse short code conjecture: For every

there exists some

and

such that if

is large enough and

is the graph above with vertex set

and

if

, then for every

, if

satisfies

then there are

basic sets

such that

satisfies

.

I call this an “inverse conjecture” since it is aimed at characterizing the sets that have unusually small expansion in the short-code graphs in terms of their correlation with basic sets.

I find it a natural question, though have to admit that, despite thinking about it some time (and also talking about it, not just with David and Pravesh but also Irit Dinur, Shachar Lovett and Kaave Hoesseini) I still don’t have a strong intuition whether it’s false or true.

Given that the short code graph is a Cayley graph over the Boolean cube, this seems like a question that could be amenable to tools from Fourier analysis and additive combinatorics.

Some partial progress has been made by the DKKMS folks in their new manuscript.

In fact, the same observations show that their original hypothesis is also equivalent not just to the “inverse short code conjecture” but also to Hypothesis 1.7 in this report, which is an analogous “inverse conjecture” for the Grassmanian graph where, for , the vertices are dimension

subspaces of

, and two subspaces

are neighbors if their intersection has dimension

.

Nevertheless, at the moment this question is still wide open, and I hope this writeup encourages more people to work on it. Other than resolving this question, there are some other interesting research directions, including:

- The DKKMS reduction combined with the Grigoriev 3XOR instance yields a concrete candidate sum-of-squares integrality gap for, say,

vs

unique games. By construction, the SOS value of this instance is

, but is analyzing the soundness of this instance easier than the general case?

-

The underlying building block for DKKMS is a problem known as a smooth label cover. This is not precisely a CSP, but can we show that it has intermediate complexity? i.e., give a subexponential time algorithm for it? If the DKKMS hypothesis is correct then we know that such an algorithm should be an SDP hierarchy.

-

Suppose the combinatorial hypothesis is true, is there an inherent reason why its proof should not be a low degree SOS proof? After all, related isoperimetric results on the short code graph have been shown by low degree SOS proofs. Also, is the proof of the current best bounds coming from the DKKMS manuscript SOS-izable?

-

The quantiative bounds for the DKKMS reduction are terrible. I haven’t worked out all the details but it seems that the reduction from 3XOR to

vs

approximation of unique games would map an

variable instance to an instance of at least

(or maybe even

) size. Is this inherent?

-

Is there a general conjecture that would predict for

(say even if

is a single predicate) and

what should be the time complexity of

vs

approximation for it? We have some approaches of trying to predict when the complexity should be

by considering pairwise independent distributions. I feel like a conjecture trying to characterize what can be done in

time would have something to do with defining a suitable notion of “$(1+\epsilon)$-wise independent distributions” perhaps via concepts of mutual information. For example, perhaps we can argue that a smooth label cover is a

-wise independent CSP to a certain extent.

The graph G that you mention is the “bilinear graph”, connecting points at distance 1 in the bilinear association scheme (over GF(2)) with appropriate parameters. This algebraic structure has been studied by coding theorists such as Delsarte, and in particular its eigenstructure is well understood.

Thanks! I didn’t know about this name.

Yes, given that it’s a Cayley graph over the cube we know all its eigenvalues and eigenvectors. The question is however whether the eigenvectors corresponding to non-trivial eigenvalues (which would correspond to the Fourier characters of matrices of constant rank) can span functions that are close to the characteristic function of a set that’s not close to a union of intersection of a constant number of those “basic sets”.