Cross-posted from https://wsmoses.com/blog/2018/12/18/boaz/

Lecturer: Aram Harrow

Scribes: Sinho Chewi, William S. Moses, Tasha Schoenstein, Ary Swaminathan

November 9, 2018

Outline

Sampling from thermal states was one of the first and (initially) most important uses of computers. In this blog post, we will discuss both classical and quantum Gibbs distributions, also known as thermal equilibrium states. We will then discuss Markov chains that have Gibbs distributions as stationary distributions. This leads into a discussion of the equivalence of mixing in time (i.e. the Markov chain quickly equilibrates over time) and mixing in space (i.e. sites that are far apart have small correlation). For the classical case, this equivalence is known. After discussing what is known classically, we will discuss difficulties that arise in the quantum case, including (approximate) Quantum Markov states and the equivalence of mixing in the quantum case.

Gibbs distributions

We have already learned about phase transitions in a previous blog post, but they are important, so we will review them again. The Gibbs or thermal distribution is defined as follows: Suppose that we have an energy function , which takes

-bit strings to real numbers. Usually,

, where each

term depends only on a few bits. For example, the energy might be the number of unsatisfied clauses in a 3-SAT formula, or it may arise from the Ising model. The Gibbs distribution is

where the normalization factor in the denominator, also called the partition function, is . Another, perhaps more operational, way to define the Gibbs distribution is:

In this expression, is the set of probability distributions on

,

is the Shannon entropy, and

is a constant representing the average energy. We are thinking of probability distributions and

as vectors of size

. It turns out that if we solve this optimization problem, then the Gibbs distribution is the unique solution.

Uses of Gibbs distributions

Why is it useful to work with Gibbs distributions?

Gibbs distributions arise naturally in statistical physics systems, such as constraint satisfaction problems (CSPs), the Ising model, and spin glasses. One approach to deal with Gibbs distributions is through belief propagation (BP), which yields exact inference on tree graphical models and sometimes phase transition predictions on loopy graphs. Instead, we will focus on a different approach, namely, sampling from the Gibbs distribution.

If we want to minimize

(say, to find a 3-SAT solution), we can use simulated annealing. The idea of annealing is that we want to produce a crystal; a crystal is the lowest energy configuration of molecules. If we heat up the substance to a liquid and then cool it quickly, we will not get a nice crystal, because little bits of the material will point in different directions. In order to form a crystal, we need to cool the system slowly.

In computer science terms, we take a sample from a high temperature because sampling is generally easier at a higher temperature than at a lower temperature. We then use that sample as the starting point for an equilibration process at a slightly lower temperature, and repeat this procedure. If we reach zero temperature, then we are sampling from the minimizers of

. In practice, the system will usually stop mixing before we get to zero temperature, but this is a good heuristic. You can think of this process as gradient descent, with some additional randomness.

Gibbs distributions are used to simulate physical systems.

Gibbs distributions are used in Bayesian inference due to the Hammersley-Clifford theorem, which will be discussed next.

Gibbs distributions are also connected to multiplicative weights for linear programming (not discussed in this blog post).

Bayesian inference & the Hammersley-Clifford theorem

In order to present the Hammersley-Clifford theorem, we must first discuss Markov networks. For this part, we will generalize our setup to a finite alphabet , so the energy function is now a function

.

Markov chains

First, let us recall the idea of a Markov chain with variables ,

,

.

The random variables ,

,

form a Markov chain if their joint distribution can be written in a factored way:

. For example, imagine that

,

,

represent the weather on Monday, Tuesday, and Wednesday respectively. These random variables form a Markov chain if, conditioned on the weather on Tuesday, we have all of the information we need to forecast the weather on Wednesday. Another way to say this is that conditioned on the weather on Tuesday, then the weather on Monday and the weather on Wednesday are conditionally independent. Note that the weather on Monday and the weather on Wednesday are not independent; there can be correlations, but these correlations are mediated through the weather on Tuesday. It is important to note that the definition of a Markov chain is symmetric with respect to going forwards or backwards in time, so we can also write the conditional independence condition as

.

The conditional independence condition can also be written as Recall that for two random variables

and

with joint distribution

, they are independent, i.e.,

, if and only if

, where

here denotes the mutual information. Similarly, conditional independence is equivalent to the conditional mutual information

equaling zero. This quantity is defined as

.

Keep in mind that conditional independence is characterized in two equivalent ways: via an algebraic condition on the distributions, and via mutual information.

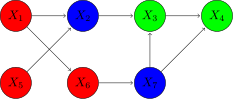

Markov networks

A Markov network is like a Markov chain, but with more random variables and a more interesting structure. Imagine that we have a graph, where each node is associated with a random variable and the edges encode possible correlations. A Markov network has the property that if we take any disjoint collection of nodes ,

, and

such that

and

are fully separated by

(that is, any path from

to

must go through

, or alternatively, removing

leaves

and

disconnected), then

. The notation

here means the collection of random variables associated with the nodes in

.

For example:

Here, if ,

, and

, then

separates

and

.

A Markov network is also called a graphical model or a Markov random field; and yet another name for them is Gibbs distribution, which is the content of the following theorem:

Theorem 1 (Hammersley-Clifford Theorem): Let be a strictly positive distribution on

. Then,

can be represented as a Markov network with respect to a graph

if and only if

can be expressed as a Gibbs distribution

, where

is the set of cliques (fully connected subsets) of

.

This theorem says that Markov networks are the same as Gibbs states, with the same notion of locality.

The Hammersley-Clifford theorem implies an area law for mutual information; we will explain what this is and sketch why this is true. Divide a system into two disjoint pieces and

. We want to know about the mutual information between

and

,

. The Hammersley-Clifford theorem gives us a bound which depends only on the size of the boundary

between these sets. For simplicity, assume

. Also, assume that the interactions have bounded range; then, the Hammersley-Clifford theorem tells us that

.

Now, we will use the fact . We can see this by writing out the expressions, but the intuition is that the term on the left asks about how much

knows about

, having already known about

. This equals how much

knows about

and

combined, minus how much

knows about

alone. In this case, since we said

, then

is the same as

. In general, however, we have an upper bound:

In this calculation, we have used (the information between

and

is the amount by which the entropy of

gets reduced once we know

) and

(which is true classically).

Since the mutual information only scales with the surface area of the boundary and not with the area of the two regions and

, this is known as an area law [1].

Relationship to Bayesian inference

In Bayesian inference, we have a model for a system which can be very complicated. The model represents our assumptions on how parts of the system are causally related to the rest of the system. We have some observations, and we want to sample from a distribution conditionally on the fixed observations. Sampling from a conditional distribution is not the same as sampling from the original distribution, but we can still formally represent the conditional distribution as a Markov network. Therefore, sampling from Markov networks is a broadly useful task.

As an example of a complicated Bayesian model, consider a hierarchical Bayesian model [2]. Bayesian statistics requires choosing a prior distribution, and when there is a natural parameterized family of priors that a statistician can use, it may make sense to introduce a distribution over the priors; this is known as introducing a hyperparameter, and inference in the resulting hierarchical model (including computation of the posterior distribution) is frequently intractable. However, it is still desirable to work with these models because they are often more accurate than models in which the prior is handpicked by a statistician.

Sampling from Gibbs distributions

The task of sampling from an arbitrary Gibbs distribution is MA-complete [3], and it is not hard to see that at low enough temperatures this problem is at least NP-hard. So, how do we sample from these distributions?

This section will discuss Monte Carlo Markov chain (MCMC) methods, namely the Metropolis-Hastings algorithm and Glauber dynamics. Readers familiar with these methods may wish to skip to the discussion of mixing in time. For readers who wish to build more intuition about Markov chains before proceeding, see the Appendix, where the simple example of the random walk on a cycle is treated in detail.

Monte Carlo Markov chain (MCMC) methods

The general approach is to use a Markov chain. Let be the possible states of the system. Effectively, a Markov chain is a way of doing a random walk over

.

The transition probabilities of the Markov chain are1 Here,

is the transition probability matrix. The column at index

of

is the probability distribution of the next state of the Markov chain, if the current state is

. The row at index

is a row of probability values which give the probabilities of jumping into state

from every other state. It has the properties that its entries are non-negative and for every

,

. These properties say that

is a (column) stochastic matrix.

Suppose we start at a state ; or, more generally, we will start with a distribution

over

. If we move according to the chain once, the distribution will be

. If we move agian, the distribution will be

. In general, after

movements, the distribution is

. So, we can express the dynamics of the chain as matrix-vector multiplication.

It is worth mentioning that if we are simulating the chain on a computer and we are manipulating -bit numbers, then these probability vectors are of size

so it becomes impractical to store the entire probability distributions.

The justification for our algorithms is the following theorem.

Theorem 2 (Perron-Frobenius Theorem): If is a stochastic aperiodic matrix, then one of the eigenvalues is

, and all other eigenvalues have magnitude strictly less than

. There is a unique probability distribution

such that

.

The theorem implies that will converge to the stationary distribution

as

. So, if we want to sample from a distribution, this provides a method of doing so: cook up a Markov chain that equilibrates to the desired distribution, and then run the Markov chain until convergence. A priori, it is not obvious how we can design the Markov chain. At first, our problem was to sample from a probability distribution (a vector), and now we have changed the problem to designing an entire matrix, which does not appear to make our task easier.

Now, the question becomes: how does one come up with Markov chains that give you the desired stationary distribution?

Metropolis-Hastings algorithm

The first algorithm we will introduce is the Metropolis-Hastings algorithm. One more desirable feature of a Markov chain is that it satisfies detailed balance, which says for all

and

. This condition says that if we pick a point with probability according to the stationary distribution and transition, the probability of picking

and then moving to

should be the same as picking

and then moving to

.

For a Markov chain in equilibrium, the total amount of probability flowing out of must equal the total amount of probability flowing into

. For example, the United States might export products to Europe and import from China. Detailed balance says that the flow along each edge must balance, which is a more demanding condition. In the example with country trade deficits, we are requiring that all bilateral trade deficits must be zero.

Mathematically, detailed balance implies that can be transformed, via similarity transformations, into a symmetric matrix. The Metropolis-Hastings algorithm says that we should choose

with the property

Suppose that we have an underlying graph on our state space, and suppose that we are at a state

. The algorithm chooses a random neighbor, say

, and then accepts or rejects this move with some probability. If the move is accepted, then we move to

and continue the algorithm from there. Otherwise, if the move is rejected, then we stay at

. We are free to choose any underlying graph (as long as it is connected and has a self-loop), and then we will tune the acceptance probability so that detailed balance holds.

Look at the trial move . One way we can accomplish detailed balance is by looking at the ratio

. If

, then always accept the move. If

, then accept the move with probability

.

To get an idea for how the algorithm works, suppose that our underlying graph is -regular. Then, for neighbors

and

,

Claim: which is manifestly symmetric in

and

; thus, we have reversibility. This is the basic idea of the Metropolis-Hastings algorithm.

How does it work for a Gibbs distribution , where the energy function might, for example, count the number of violated clauses in a 3-SAT formula? In this case, we might be a little worried. The numerator of

is pretty easy to compute (we can count how many violated constraints there are), but the denominator is hard to compute. In general, it is #P-hard to compute the denominator, because as

drops to

, the partition function in this case approaches the number of 3-SAT solutions. So, how do we calculate the ratios

that the algorithm requires? We’re able to do this because the ratio does not depend on

:

Suppose that the energy is a sum of local terms, and the underlying graph corresponds to modifying one site at at a time. What this means is that the graph is and the edges in the graph correspond to flipping exactly one bit. In this case, it becomes very easy to evaluate the computations needed for the algorithm; in fact, we can even do them in parallel.

How do we choose the underlying graph? The key idea is that we do not want the majority of our moves to be rejected. A good example to keep in mind is the Ising model, where the configurations are and the energy is

. If

for all

,

, then we say that the model is ferromagnetic (we obtain lower energy by making the sites agree with each other). Of course, an antiferromagnetic model is just the opposite of this.

Assume that the bits are laid out in a square and if

and

are neighbors on the square, and

if they are not. As we vary the quantity

, we observe a phase transition. If

is small, then the coupling between the random variables is weak and the different parts of the system are almost independent; we call this the disordered phase. If

is large, then the spins want to align in the same direction and the Gibbs distribution will look almost like the following: with probability

, all spins are

, and with probability

, all spins are

; we call this the ordered phase.

In the disordered phase, when the spins do not need to align so closely, the Metropolis-Hastings algorithm will work well. In the ordered phase, the algorithm is doomed. Indeed, suppose that most of the spins are . As time proceeds, any

s will switch to

. There may be islands of

spins initially, but it will be energetically favorable for these islands to shrink over time. Therefore, there will be an exponentially small chance for the system to switch to a configuration with mostly

’s, and thus the chain takes exponentially long to mix. Here, people are interested in understanding the autocorrelation time, because the goal is to run the chain for some time, get one sample, run the chain for some more time, get another sample, etc.

Glauber dynamics

This next method (Glauber dynamics) is essentially the same as Metropolis-Hastings, but this is not immediately obvious. We are at a state . (For the Metropolis-Hastings algorithm, we could be walking on a state space without a product structure. However, Glauber dynamics requires a product structure.) Then, we update

to

with chance

. In other words, we hold all other bits fixed, and conditioned on those other bits, we resample the

th bit. Like Metropolis-Hastings,

is stationary for this chain.

It is not obvious that these conditional distributions can be computed efficiently, but it is possible since normalizing the conditional distribution only requires summing over the possible configurations for a single random variable. On a Markov network, the conditional probability is , where

denotes the set of neighbors of

. This makes the computation a constant-sized calculation (i.e., does not depend on the size of the system).

For example, in the Ising model, suppose we are at state . In Glauber dynamics, we pick a vertex

u.a.r. and update it to

with probability

Mixing in time

Mixing in time means that the dynamics will equilibrate rapidly. It turns out that this is equivalent to mixing in space, which means that itself has decaying correlations. For example, the Ising model at low temperature has a lot of long-range correlations, but at high temperature it does not. For the high temperature regime, we can prove that mixing in time occurs. We will prove this for the ferromagnetic Ising model. The result is known more generally, but the proofs are much easier for the Ising model.

People have known about the Metropolis-Hastings algorithm since the 1950s, but only recently have researchers been able to prove convergence guarantees for the 2D Ising model. There is a large gap between theory and practice, but in some situations we can prove that the algorithm works.

Sampling from the distribution is roughly equivalent to estimating the partition function (sampling-counting equivalence). There have been many papers addressing tasks such as estimating the non-negative permanent, the number of colorings of a graph, etc.2 A dominant way of accomplishing these tasks is proving that the Metropolis-Hastings algorithm converges for these problems. It is easy to find algorithms for these problems that converge to Gibbs distributions, but the convergence may take exponential time.

We will look at the situation when the energy function looks like the Ising model, in the sense that the interactions are local and reflect the structure of some underlying space. Also, assume that the interactions are of size and that the scaling comes from the size of the system. When can we expect that our algorithms work? There are two main cases when we can argue that there should be rapid mixing.

- High temperature regime: The system is very disordered, and in the limit as the temperature approaches infinity, we get the uniform distribution.

- One-dimension: In 1D, we can exactly compute the partition function using dynamic programming. Before, we mentioned that if there are a sea of

s and an island of

s, then it is energetically favorable for the island to shrink; note that this is no longer true in 1D. In a way, 1D systems are more “boring” because they cannot exhibit arbitrarily long-range correlations.

In this part of the blog post, we will try to be more proof-oriented. We will start by explaining why it is plausible that high temperature means that the chain will mix rapidly in time.

Coupling method

One method of proving rates of convergence for Markov chains is by analzying the spectral gap. Another method is the coupling method.

The idea behind the coupling method is to start with two configurations . We want each one to evolve under the Markov chain.

The key part is that there is still some freedom with respect to what the dynamics looks like. In particular, we are allowed to correlate the and

processes. Thus, we are defining a joint transition probability

. We want to design the process such that

and

are closer together than

and

. Imagine that we have two particles bouncing around. Each particle follows the dynamics of

, but they are correlated so that they drift together, and once they meet, they stick together. It turns out that the mixing time can be upper bounded by the time it takes for the particles to meet each other.

Assume we have some sort of distance function on the underlying space and we can prove that

. Then, it turns out that the mixing time

, i.e. the time required to get within

of the stationary distribution, is upper bounded as

Initially, the two particles can be apart, but the expected distance is exponentially shrinking as we run the coupling, so the mixing time is logarithmic in the diameter.

The distance between probability distributions is defined as follows. Let and

be two probability distributions on

. Then, the metric is:3

In this expression, and

denote the first and second marginals of

respectively. The minimum is taken over all couplings of

and

. This is the correct way to measure the distance between distributions. To give some intuition for this quantity, the quantity on the right represents the best test to distinguish the two distributions. If

and

are the same, we can take a coupling in which

and

are always identical. If

and

have disjoint supports, then no matter what coupling we use,

and

will never be equal.

It suffices to consider when and

are neighbors, i.e. at distance

apart. This is because if we have

and

far apart, then we could look at the path between them and reduce to the case when they are neighbors. Formally, this is known as path coupling. The formal statement is in Theorem 12.3 of [4]:

Theorem 3: Let be a connected weighted graph on the state space, where no edge has weight less than

. Let

be the length of the shortest path from

to

in

and let

be the diameter of

. Suppose there is a coupling such that for some

,

for all neighboring pairs ,

, i.e., those pairs connected by an edge in

. Then, the mixing time is bounded by

Glauber dynamics at high temperature

Recall that in Glauber dynamics, we pick a site randomly and then update the site conditioned on its neighbors. The first way we will couple together

and

is by picking the same site for both of them.

- Pick a random

.

- If

, then set

(if the neighborhoods of the two points agree, then update them the same way). Otherwise, update them using the best possible coupling, i.e., pick a coupling for

which minimizes

.

So if , then the points will never drift apart. The reason why analyzing this coupling is non-trivial is because there is a chance that the distance between the two points can increase.

Assume that the degree of the graph is . Suppose that

, that is, there is a single

such that

. What will happen to

and

? We start by picking a random

. There are three cases:

(with probability

): Nothing changes;

and

agree at

, and

and

will also agree at

. The distance remains at

.

(with probability

): We picked the one spot in which the two configurations differ. The neighborhoods of

are the same for

and

, so we update in the same way for both processes, and the distance drops to

.

(with probability

): We could have different updates. Here, we have to use the high temperature assumption, which says that if we change one bit, the probability of a configuration cannot change too much.In the Ising model,

. Changing

can bias the energy by at most

, so the expected distance afterwards is

.

Adding these cases up to get the overall expected distance gives

for large enough, so the expected distance will shrink. This argument also tells us how large the temperature must be, which is important for applications. This gives us

Notice that this is the same dependence as the coupon collector problem. Therefore, in the high temperature regime, the system behaves qualitatively as if there are no correlations.

Temporal and spatial mixing equivalence

The analysis of Glauber dynamics at high temperature is already a version of the equivalence between mixing in time and mixing in space. It says that if the correlations even with the immediate neighbors of a node are weak, then Glauber dynamics rapidly mixes.

Now, we want to consider the situation in which there can be strong correlations between immediate neighbors, but weak correlation with far away sites. We want to show that spatial mixing implies temporal mixing.

We will give a few definitions of correlation decay. (Note: The definitions of correlation decay below are not exactly the ones from Aram’s lecture. These definitions are from [5] and [6].)

For non-empty and

, let

be the distribution of the spins in

conditional on the spins in

being fixed to

. For

, let

be the marginal of

on the spins in

. We will assume that the interactions between the spins have finite range

, and

denotes the

-boundary of

, i.e.,

.

- (Weak decay of correlations) Weak spatial mixing holds for

if there exist constants

such that for any subset

,

- (Strong decay of correlations) Strong spatial mixing holds for

if there exist constants

such that for every

and every

differing only at site

,

- (Strong decay of correlations) Strong spatial mixing in the truncated sense holds for

if there exist

such that for all functions

which depend only on the sites at

and

respectively and such that

,

Here, is the correlation length (in physics, it is the characteristic length scale of a system). In the disordered phase, the correlation length is a constant independent of system size. For our purposes, the main consequence of these definitions is that the effective interaction range of each spin is

. For the Ising model, there is a key simplification due to monotonicity. Namely, the ferromagnetic Ising model has the nice property (which is not true for other models) that if we flip a sign from

to

, this only makes

more likely everywhere. This is because the spins want to agree. There are a lot of boundary conditions to consider, but here, due to monotonicity, we only need to consider two: all of the spins are

, and all of the spins are

. All

spins will give the highest probability of a

spin, and all

spin will give the lowest probability of a

spin. This monotonicity property is generally not required for time-space mixing equivalence to hold, but it greatly simplifies proofs.

It is a very non-obvious fact that all of these notions of spatial mixing are equivalent. We will sketch a proof that strong correlation decay implies that .

The idea is to use another coupling argument. Let differ in one coordinate, i.e.,

and

for

. We want to argue that the expected distance between the processes will decrease. The proof uses a generalization of Glauber dynamics called block Glauber dynamics. In Glauber dynamics, we take a single spin and resample it conditioned on its neighbors. In block Glauber dynamics, we take an

box and resample it conditioned on its neighbors. There is an argument, called canonical paths, which can be used to show that if block Glauber dynamics mixes, then regular Glauber dynamics also mixes (slightly more slowly; we lose a

factor, but anyway

will be a large constant) so analyzing block Glauber dynamics is fine.

If lies in the box, then the expected change in distance is

. If

is far away from the box, then there is no change. If

is in the boundary of the box, then it is possible for the distance to increase. However, strong spatial mixing allows us to control the influence of a single site, so the expected change in distance is bounded by

. Now, since

is a constant, if we choose

sufficiently large, then we will have the same situation as in the high temperature case: the expected distance will exponentially shrink over time.

Quantum systems

The quantum version of Markov chains has many more difficulties. The first difficulty is that the Hammersley-Clifford theorem (which we have been relying on throughout this blog post) fails.

Notation

To properly discuss what we mean, let’s set up some notation. Readers already familiar with density matrices, quantum entropy, and quantum mutual information may wish to skip to the next subsection. Most of the time we discuss quantum objects here, we’ll be using density matricies, often denoted . A density matrix can be thought of as an extension to regular quantum states

, where there is some classical source of uncertainty.

A density matrix is a positive semidefinite matrix with trace . This extends the notion of a classical probability distribution; in the quantum setting, a classical probability distribution

(thought of as a vector whose entries sum to

) is represented as the density matrix

.

For example, we can consider a situation in which there is a probability that we started with the quantum state

and a

probability that we started with the quantum state

. This would be denoted as follows:

Density matricies are generally useful for a lot of tasks, but for our purposes a density matrix will be used to discuss both the classical and quantum “uncertainty” we have about what state we have.

Now let’s also talk about a second important piece of notation: the tensor product. Often when discussing quantum states, it is important to discuss multiple quantum states simultaneously. For example, Alice has one system and Bob has another system

. However, these systems might be entangled, meaning that the results of the two systems are correlated.

For instance, let us consider the following state:

This particular state has the property that Alice and Bob will always both measure or they will both measure

. The notation for tensors is often ambiguous in the literature as there are many ways of specifying tensors. For instance, above we used subscripts to explicitly denote which particle was in system

and which was in system

. One may also choose to simply use the index of the system as below. The symbol

is used to denote a tensor between states (where it is assumed that the first state is system

and the second, system

). Gradually folks may shorten the notation as follows:

These are all notations for the same state. Let’s now talk about this state in the context of a density matrix. The density matrix of this state is as follows:

Writing the density matrix as

makes explicit that this is the density matrix over systems

and

.

A crucial operation that one will often perform using density matricies is the partial trace. The partial trace is a way of allowing us to consider only a smaller part of the larger part of the system, while taking into account the influence of the larger system around it.

Here’s an example: Suppose Bob wants to know what his state is. However, Bob really doesn’t care about Alice’s system and just wants to know what the density matrix for his system is. Bob’s density matrix is simply the following density matrix (a 50% chance of being in and a 50% chance of being in

).

More explicitly, we could write the following:

The partial trace is an operation that will let us take our original density matrix and generates a new density matrix

that ignores system

. This is specifically called the partial trace over

, or

.

So how do we do this? We simply sum over the state (effectively taking a trace, but only along one axis):

This is easier to evaluate using certain choices of notation:

This gives us the answer that we had expected.

We now have all of the tools we need to talk about quantum entropy. Intuitively, entropy can be thought of as the amount of uncertainty we have for our system, or equivalently the amount of information it takes to define our system. The entropy for a quantum system is defined as follows:

Note that here we use the shorthand to denote

. Here, writing

without the subscript indicates that this is the full or normal trace that one might expect (or equivalently performing the partial trace over all systems). We can now define the conditional entropy of a system as follows:

This definition intuitively makes sense since we can think of conditional entropy as the amount of information it takes to describe our joint system , given that we already know what

is.

We can now discuss quantum mutual information, the amount of information that measuring system will provide you about system

. Like the classical case, this is defined as follows:

We can now finally discuss quantum mutual information (QCMI), defined as follows: . With some algebraic simplifications, one can arrive at the expression:

The QCMI equals if and only if

is a quantum Markov state. Classically, the entropic characterization of conditional independence corresponds to an algebraic characterization.

Recovery Maps

Here, the algebraic characterization is more grueling. We have

Equivalently,

Here, is called the Petz recovery map,4

. One can think of a recovery may as a way that we can reconstruct the entire system

using just system

. It is not obvious that this is a quantum channel, but it is.

Suppose is a probability distribution, so

for some vector

. Then, all of the density matrices are diagonal and commuting. Then, the recovery map means that we divide by

and multiply by

, i.e., multiply by

. This is the natural thing to do if we lost our information about

and were trying to figure out what

was based on our knowledge of

. This is why

is known as a recovery map, and it is used to discuss conditional distributions in the quantum setting. In the classical case, if we start with

, look only at

, and use this to reconstruct

, then we would have the whole state in a Markov chain. That is why this is a plausible quantum version of being a Markov chain.

However, quantum Gibbs states are not, in general, quantum Markov chains. The failure of this statement to hold is related to topological order, which is similar to the degrees of freedom that show up in error correcting codes.

Quantum Markov Networks

Here, we will formally define a quantum Markov network. The reference for this is [7].

Let be a finite graph. We associate with each vertex

a Hilbert space

and we consider a density matrix

acting on

. Then,

is a quantum Markov network if for all

,

is conditionally independent of

given

, where the conditional independence statement is w.r.t.

and means that the corresponding QCMI satisfies

.

A quantum Markov network is called positive if has full rank. (Recall that in the statement of the Hammersley-Clifford Theorem, , it is assumed that the distribution is strictly positive.)

Now, consider the following example. First, we introduce the Pauli matrices

We define a Hamiltonian on three qubits ,

,

by

(Juxtaposition in the above expression signifies the tensor product as discussed before.) Finally, for , we define the Gibbs state

The Hamiltonian here has local terms which correspond to interactions ,

. However, it can be shown that the QCMI between

and

conditioned on

w.r.t.

is non-zero, which means that this is not a quantum Markov network w.r.t. the line graph

. This demonstrates the failure of the Hammersley-Clifford Theorem in the quantum setting.

Important Results

We will briefly discuss the results of two papers.

- [8] This paper shows that mixing in space implies mixing in time in the quantum case. However, the result of the paper only applies to commuting Hamiltonians. For commuting Hamiltonians, it turns out that quantum Gibbs states are quantum Markov networks. They use a version of Glauber dynamics, which can be simulated on a quantum computer but are also plausible dynamics for a physical system in nature. This is a difficult paper to read, but it is worth digesting if you want to work in the field.

- [9] This second paper is much easier and more general, covering non-commuting Hamiltonians, but it requires more conditions. They give a method of preparing the Gibbs state which can run on a quantum computer, but the dynamics are not plausible as a physical system because they are too complicated. The more complicated dynamics allows them to make the proof work. The paper also uses QCMI.They have two assumptions. The first assumption looks like mixing in space (weak correlation decay). The second assumption is that the state looks approximately like a quantum Markov network (this is definitely not met in general). A very important paper in this space is a recent breakthrough ([10]) which characterizes quantum Markov chains. They show that if the QCMI is bounded by

, then the recovery map

is

-close to

, i.e., low QCMI implies that the recovery map works well. This is trivial to prove classically, but very difficult in the quantum world.The algorithm in [9] is very elegant. Essentially, we take the entire system and punch out constant-sized boxes. If we can reconstruct the region outside of the boxes, then we can use the recovery maps to reconstruct the regions inside of the boxes, and the boxes are far apart enough so they are almost independent. For this argument, we must assume that the QCMI decays exponentially. Whenever we have exponential decay, we get a correlation decay that sets the size of the boxes. It is very difficult to condition on quantum states, but recovery maps provide a sense in which it is meaningful to do so. The paper gives an efficient method of preparing Gibbs states and simulating quantum systems on quantum computers.

Additional reading

The standard treatment of information theory is [11]. This book contains definitions and properties of entropy, conditional entropy, mutual information, and conditional mutual information.

To see a treatment of the subject of Markov chains from the perspective of probability theory, see [12] or the mathematically more sophisticated counterpart [13]. An introduction to coupling can be found in [14], as well as [4] (the latter also contains an exposition to spatial mixing). The connection between Markov chain mixing and the so-called logarithmic Sobolev inequality is described in [15].

Appendix: Intuition for Markov chains

Random walk on the cycle

We have points on the cycle,

. At each step, we move left or right with probability

. We can write the transition matrix as

where is the shift operator

. The matrix

is diagonalized by the Fourier transform. Define, for

,

We have the same amount of amplitude at every point, but there is a varying phase which depends on . If

, we get the all-ones vector. If

is small, then the phase is slowly varying. If

is large, then the phase is rapidly varying. Look at what happens after we apply the shift operator:

After the shift, we pick up an additional phase based on how rapidly the phase is varying. From this, we get:

The eigenvalues are

Only will give me an eigenvalue of

.

How do we analyze ? We should Fourier transform the distribution.

If is odd, then as

,

for all

, so

. Whatever you put into this operator, you get

out.

Spectral gap

The example of the random walk on the cycle shows that there is generally a unique stationary distribution and suggests that the speed of convergence is determined by how close the other eigenvalues are to . Specifically, suppose for simplicity that the eigenvalues of

are

(real and positive). Then, the convergence time is on the order of

.

Typically, the distance of the eigenvalues from reflects the size of the physical system. Even from the simple example, we can get some physical intuition from this. If

is small, then the spectral gap is

. Thus, the convergence time is

, which is indeed the convergence time for a random walk on a cycle.

References

- S. Gharibian, Y. Huang, Z. Landau, and S. W. Shin, “Quantum Hamiltonian complexity,” Found. Trends Theor. Comput. Sci., vol. 10, no. 3, pp. front matter, 159–282, 2014.

- R. W. Keener, Theoretical statistics. Springer, New York, 2010, p. xviii+538.

- E. Crosson, D. Bacon, and K. R. Brown, “Making Classical Ground State Spin Computing Fault-Tolerant,” Physical Review E, vol. 82, no. 3, Sep. 2010.

- C. Moore and S. Mertens, The nature of computation. Oxford University Press, Oxford, 2011, p. xviii+985.

- F. Martinelli, “Lectures on Glauber dynamics for discrete spin models,” in Lectures on probability theory and statistics (Saint-Flour, 1997), vol. 1717, Springer, Berlin, 1999, pp. 93–191.

- F. Martinelli and E. Olivieri, “Finite volume mixing conditions for lattice spin systems and exponential approach to equilibrium of Glauber dynamics,” in Cellular automata and cooperative systems (Les Houches, 1992), vol. 396, Kluwer Acad. Publ., Dordrecht, 1993, pp. 473–490.

- M. S. Leifer and D. Poulin, “Quantum graphical models and belief propagation,” Ann. Physics, vol. 323, no. 8, pp. 1899–1946, 2008.

- M. J. Kastoryano and F. G. S. L. Brandão, “Quantum Gibbs samplers: the commuting case,” Comm. Math. Phys., vol. 344, no. 3, pp. 915–957, 2016.

- F. G. S. L. Brandão and M. J. Kastoryano, “Finite correlation length implies efficient preparation of quantum thermal states,” ArXiv e-prints, Sep. 2016.

- O. Fawzi and R. Renner, “Quantum conditional mutual information and approximate Markov chains,” Comm. Math. Phys., vol. 340, no. 2, pp. 575–611, 2015.

- T. M. Cover and J. A. Thomas, Elements of information theory, Second. Wiley-Interscience [John Wiley & Sons], Hoboken, NJ, 2006, p. xxiv+748.

- R. Durrett, Essentials of stochastic processes. Springer, Cham, 2016, p. ix+275.

- R. Durrett, Probability: theory and examples, Fourth., vol. 31. Cambridge University Press, Cambridge, 2010, p. x+428.

- M. Mitzenmacher and E. Upfal, Probability and computing, Second. Cambridge University Press, Cambridge, 2017, p. xx+467.

- F. Cesi, “Quasi-factorization of the entropy and logarithmic Sobolev inequalities for Gibbs random fields,” Probab. Theory Related Fields, vol. 120, no. 4, pp. 569–584, 2001.

- This is the opposite of the probabilists’ convention, i.e., the transition probability matrix that we define here is the transpose of the one usually found in most probability theory textbooks. ↩

- As a side note, it may be a good research question to investigate to what extent quantum algorithms can be used to compute summations whose terms are possibly negative. In quantum Monte Carlo, the quantum Hamiltonian is converted to a classical energy function; this conversion always works, but sometimes you end up with complex energies, which is terrible for estimating the partition function because terms can cancel each other out. ↩

- You may recognize this as the total variation norm. ↩

- Petz wrote about quantum relative entropy in 1991, way before it was cool. ↩