This fall, I am once again teaching Harvard’s “Introduction to Theoretical Computer Science” course (CS 121). Like many “intro to TCS / intro to theory of computation” courses, Harvard’s course used to be taught with Sipser’s classic textbook. Sipser’s book is indeed, for better or worse, a classic. It is extremely well-written and students like it very much. It has clear explanations, plenty of examples and solved exercises, and a wealth of material on the web accumulated through decades of it being used in many courses. On the other hand, CS in general and theoretical CS in particular has changed a lot in the 25+ years since the book was written. In fact, the basic approach of starting with finite automata as the initial model of computation dates to the 1969 book of Hopcroft and Ullman.

One of my main goals in revising the theoretical CS course is to give students both rigorous foundations as well as a taste of modern topics. Some of these modern topics:

- Cryptography: a topic that combines mathematical beauty, practical importance, and a demonstration that sometimes computational hardness can be a resource rather than a hindrance.

- Quantum computing: a topic that shows the interaction between TCS and physics, the fundamental nature of the “Church Turing hypothesis”, and how we can (as in crypto) take a “lemon” (inability of classical computers to simulate certain quantum processes) and use it to make “lemonade” (a computer with stronger power than classical computers).

- Randomized computation and derandomization: Randomization is now essential to so many areas of CS, and so it is important to both demonstrate its power, and also how we might use complexity to remove it.

- Machine learning and average-case complexity: Traditionally in an intro TCS course the focus is purely on worst-case complexity. This leads to a disconnect with modern applications of CS, and in particular machine learning.

So, I ended up writing my own text – Introduction to Theoretical Computer Science. While at some point I hope to make it into a printed book, it will always be available freely online on https://introtcs.org/. The markdown source for it is available on the repository https://github.com/boazbk/tcs . I’ve benefitted greatly from feedback from both students and readers around the globe: at the time of writing, the project has 330 issues and 385 pull requests.

A central difference between the approach I take and the one of previous courses is that I start from Boolean circuits as the first model of computation. Boolean circuits are crucial to teach the topics above:

- Cryptography is much more natural with circuits rather than Turing machines as the model of computation. Statements such as “128 bits of security” make no sense in the asymptotic Turing machine formalism, but can be made precise with circuits.

- The standard model for quantum computing is quantum circuits.

- Derandomization is best described using the circuit model, and of course many results such as BPP in the Polynomial-Hierarchy are best shown using circuits and the class P/poly as an intermediate concept.

- Circuits are a very natural fit for machine learning, and in particular Neural Networks are just a special type of circuit.

Finally, while circuits are often considered an “advanced” topic, they have some advantages over automata as the initial model of computation:

- Finite is always easier than infinite: Starting with circuits enables us to start the course talking about arguably the simplest object: finite functions. Writing down the truth table of a finite function, and showing that there is more than one circuit to compute the same function, also helps clarify the difference between specification of a function and its implementation by some algorithm, which is distinction that many students grapple with.

- Circuits are connected to actual hardware. An intro to TCS course is not a pure math course – we want to convey to students that are models are motivated by actual computing. Circuits make this connection much closer, and less artificial than automata or even Turing machines.

- Can show cool theorems early. If we start with automata, then the first theorems we show can often seem not well motivated to students. It takes some time to build the machinery to show the main theorem – equivalence of automata and regular expressions – and the proof of that theorem is rather technical. In contrast, with circuits we can show three important theorems rather quickly: (1) every finite function

can be computed by some circuit of at most

size, (2) every circuit of size

can be represented as a labeled graph and hence (using adjacency list) by a string of size

, and (3) using (2) and the fact that there are

functions mapping

to

, there exist some function

that requires a circuit of

gates.

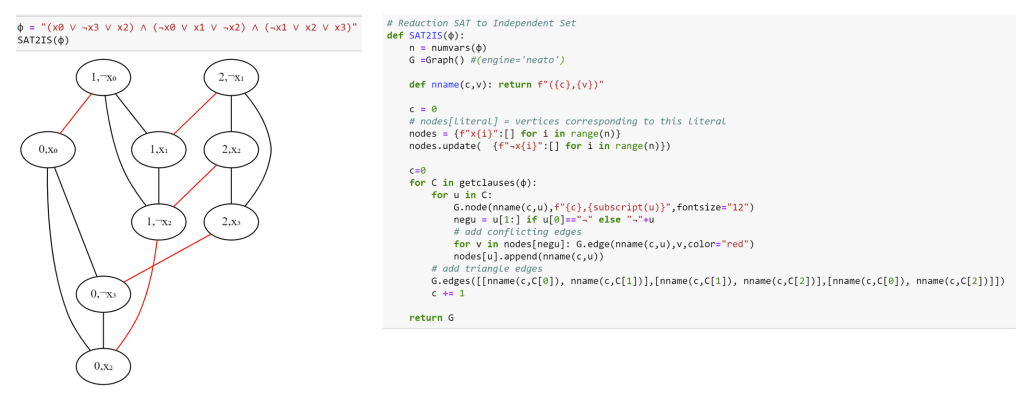

While the course is a theory course, and not about programming, one of my goals in the book and course was to connect it to programming. This is not just to motivate students and make them feel that the material is “practical” but also to better understand the theory itself. Notions such as NP-completeness reductions can be often confusing to students (which is why they get the direction wrong half the time). Implementing a reduction from 3SAT to independent set and seeing the end result make it much more concrete.

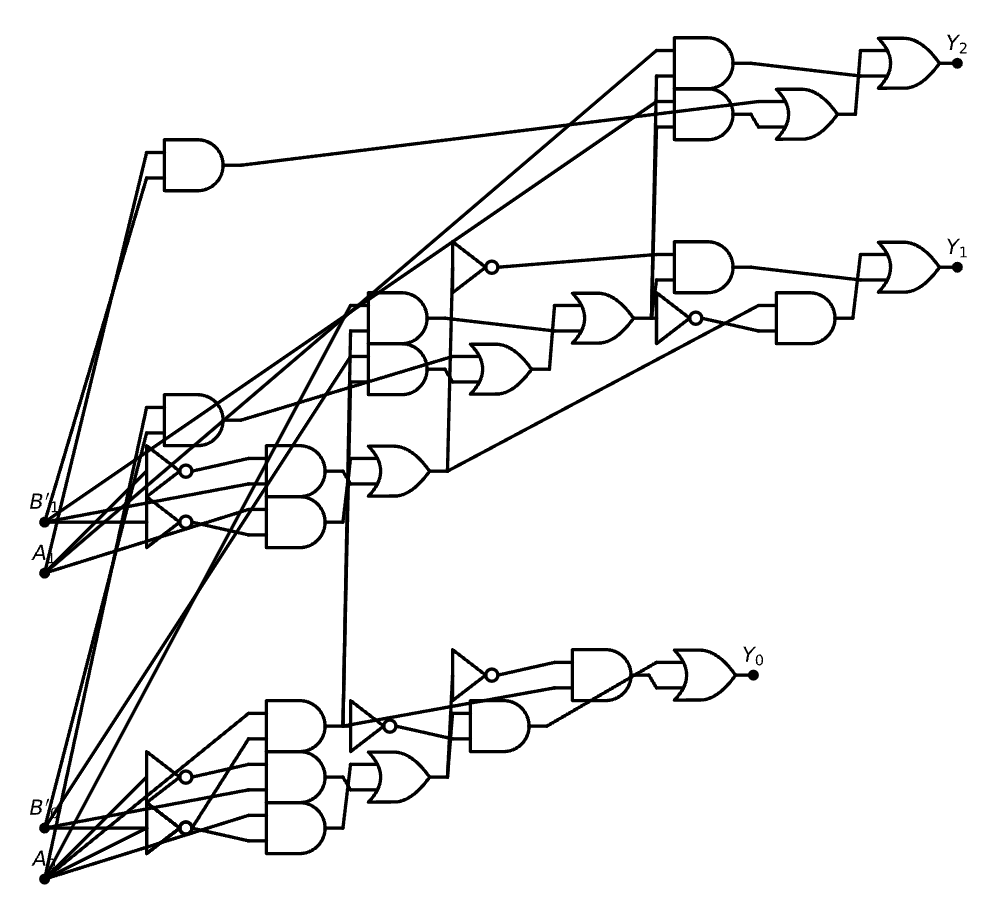

One way in which I wanted to use programming is to demonstrate to students how we can take a piece of Python code such as the code for adding two numbers given in their binary representation:

def add(A,B):

"""Add two binary numbers, given as lists of bits"""

Y = []

carry = zero(A[0]) # initialize carry to 0

for i in range(len(A)): # compute i-th digit of output

y = xor(A[i],B[i],carry) # xor function

carry = maj(A[i],B[i],carry) # majority function

Y.append(y)

Y.append(carry)

return Y

And obtain the corresponding circuit:

The code also uses the following one-linear helper functions

def maj(a,b,c): return (a & b) | (b&c) | (a&c)

def zero(a): return a & ~a

def xor2(a,b): return (a & ~b) | (~a & b)

def xor(*L): return xor2(*L) if len(L)==2 else xor2(xor(*L[:-1]),L[-1])

If you think about it, the task corresponds to extracting the computational graph of a piece of Python code. This is precisely the same task that auto-differentiation packages such as Pytorch need to do. Hence it can be solved in a similar way. Thus, inspired by Karpathy’s micro-grad package (see my back-propagation tutorial) and using the awesome SchemDraw package, I wrote a short colab notebook that does precisely that.

Specifically, the notebook defines a Bit class that (as its name suggests) stores a single bit. The class defines the logical AND, OR and NOT operations ( &, | , ~ in Python). If a and b are two bits then c = a & b not just contains the value which is the AND of the values of a and b, but also pointers to a and b and remembers how it was computed from them. This allows us to obtain from c a formula/circuit expressing it in terms of a and b.

class Bit:

counter = 0

def __init__(self,val=0, label="-"):

self.label = label

self.data = val

self.children = []

def op(self,f, label, *others):

inputs = [self.data] + [o.data for o in others]

out = Bit(f(*inputs),label)

out.children = [self] + list(others)

return out

def __and__(self,other): return self.op(lambda a,b: a & b, "\\wedge", other)

def __or__(self,other): return self.op(lambda a,b: a | b, "\\vee", other)

def __invert__(self): return self.op(lambda a: ~a, "\\neg")

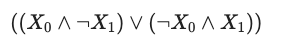

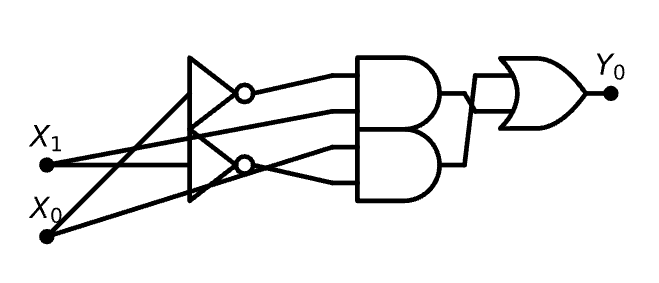

Now we can write a simple recursive function formula (see the notebook) to give out the latex of the formula corresponding to how a particular bit was computed. So if we write

from IPython.display import Markdown, display, Math

Y = xor(Bit(0,"X_0"), Bit(1,"X_1"))

Math(formula(Y))

Then we will get

The code of a recursive function that transforms this into the circuit

A = [Bit(0,f"A_{i}") for i in range(2)]

B = [Bit(0,f"B_{i}") for i in range(2)]

draw_circ(*add(A,B))

See the colab notebook for more.

Just out of curiosity, is Hopcroft and Ullman the first ever theoretical CS textbook?

No. For example Marvin Minsky: Computation: Finite and Infinite Machines was published in 1967.

It is a wonderful book. It has one of the nicest expositions of Turing’s undecidability (qutoing extensively from the original paper), builds finite automata from circuits, builds McCullogh “neurons” from circuits. Unfortunately it is quite dated, and spends a lot of time on topics like the minimal

universal Turing machine under the measure (number of states)x(number of alphabet symbols) that are not in the “theoretical minimum”.

There were even earlier texts on “Switching Theory” and on automata — for example Hartmanis and Stearns: Algebraic Structure Theory of Sequential Machines or Z. Kohavi Analysis and Synthesis of Sequential Switching Circuits, 1963 (that eventually morphed into “Switching and Finite Automata Theory (with Jha as coauthor) 2010.

You’re teaching at Harvard? I’m sorry but those diagrams are pretty terrible. You should at least draw the circuit such that channels intersect perpendicularly with a bubble to indicate crossing over. Otherwise, the content here is a nice read.

If you want to write some code to make it the crossings nicer then I’ll happily use it 🙂

I am very happy to read this because in the Spring I will also teach Intro to TCS/algs and I was planning to start with decision trees. I think that students can relate to them much better through the 20 questions game and faulty coin finding scale puzzles. After decision trees, I was planning to do Turing machinces, but maybe doing circuits would be even better.

“Circuits are connected to actual hardware”… That is perfect because hardware is real. Physics is where logical comparisons happen and where information is stored.

All our ideas are true when they align with reality and fictional when they don’t.

The scientific process tests our ideas against reality.

This is also why Gödel’s Incompleteness problem exists. A logical system always has an external part. That part is reality, where the information is stored and processed.

Boolean circuits are indeed extremely simple and natural because they correspond directly to formulas in classical propositional logic. But the NAND-TMs you then use seem to be the complete opposite. They have very little to do with Boolean circuits, they are complex and unnatural. Arguably they are even more unnatural than Turing machines.

Wouldn’t it be a much better idea to generalize Boolean circuits by adding a clock signal, and based on that flip-flops, which can encode states, and based on those, loops? I think this approach is known in the literature as “sequential logic”.

I don’t really use “NAND Turing Machines” that much. I want to convey an important concept – that we should think of circuits and machines not just as physical objects, but also as data – simply strings of text. This duality between code and data is of course crucial to computer science.

So it is important for me to have both models that seem “physical” such as circuits or Turing Machines (Where here I use the standard Turing machines because they are standard in the literature) and models corresponding to programs where the object is simply a list of instructions. For the second I use straightline programs as the programming language model that correspond to circuits, and add loops and arrays to those to get a model that corresponds to turning machines.

Whether the underlying operations are NAND or AND/OR/NOT or anything else is not very important (and students learn that it’s not important through doing exercises showing equivalence). Also, very quickly we talk about Turing completeness and how all reasonable models are equivalent, and hence the details of the model do not really matter