See arxiv link for paper by Nikhil Vyas, Sham Kakade, and me.

Conditional generative models hold much promise for novel content creation. Whether it is generating a snippet of code, piece of text, or image, such models can potentially save substantial human effort and unlock new capabilities. But there is a fly in this ointment. These models are trained on vast quantities of data, much of which is copyrighted. Due to precedents such as Authors Guild vs Google, many legal scholars believe that training a machine-learning model on copyrighted material constitutes fair use. However, the legal permissibility of using the sampled outputs of such models could be a different matter.

This is not just a theoretical concern. Large models do memorize significant chunks of their training data. For example, if you feed the first sentence of Harry Potter and the Sorcerer’s Stone to GPT-3, it provides the remaining ones:

(To be fair to GPT-3, this text likely appears many times in its training set; deduplication can help with reducing memorization but is not a panacea.)

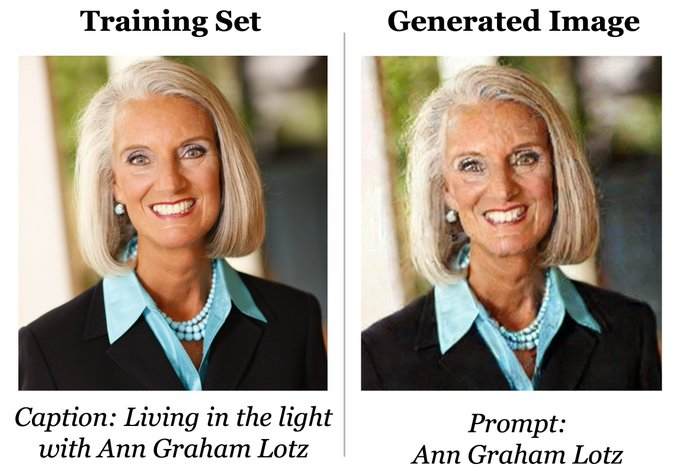

Similarly, as shown by Carlini et al, diffusion models can (and do) memorize images from their training set as well; see this figure from their paper:

Given the above, if you use a generated code in your program or a generated art in your design, how can you be sure it is not substantially similar to some copyrighted work from the training set, with all the legal and ethical implications this entails?

In a new paper, we (Nikhil, Sham, and Boaz) provide a formalism that enables rigorous guarantees on the similarity (and, more importantly, guarantees on the lack of similarity) between the output of a generative model and any potentially copyrighted data in its training set. Our work is not just theoretical: we give algorithms that can transform a training pipeline into one that satisfies our definition with minimal degradation in efficiency and quality of output. We demonstrate this on both language (transformer) and image (diffusion) models.

As noted in our paper, there are a number of ethical and legal issues in generative models. We should emphasize that our work focuses solely only on copyright infringements by the outputs of these models, and our concepts and tools do not address issues related to other forms of intellectual property, including privacy, trademarks, or fair use. Also, despite superficial similarities between the goals of privacy and copyright protection, these notions are distinct, and our work shows that solution concepts for the latter need not address the former. (See the paper for a detailed discussion of the differences between our definition and differential privacy.)

This post only provides an informal presentation of the concepts and tools formally defined in the paper. Please see the paper for full details.

The Technical Concept: Near Access-Freeness

Our definition is motivated by laws of the U.S. and many other countries to establish that copyright infringement has occurred. This requires:

- Access: To prove that a copyright infringement took place, the plaintiff needs to prove that “the defendant had access to the plaintiff’s copyrighted work.”

- Substantial similarity. The plaintiff also needs to prove there are “substantial similarities between the defendant’s work and original elements of the plaintiff’s work.” The Feist v. Rural U.S. Supreme Court Opinion states that this similarity must be the result of actual copying and not fortuitous similarity: In their words: “assume two poets, each ignorant of the other, compose identical poems … both are original and, hence, copyrightable.”

A natural candidate to capture the notion of access is to say that a generative model had access to some copyrighted piece of data

if

was included in

’s training set (our formalism permits other notions of access as well). Formally defining “substantial similarity” is arguably subtler. Simple measures such as Hamming distance or verbatim copying don’t cut it. For example, in Andy Warhol Foundations v. Goldsmith, a case currently before the supreme court, the question is whether Warhol’s transformations of Goldsmith’s photo of Prince constitute “fair use.”

Some of these transformations result in significant Hamming distance, though they can all be captured in only a few bits of information. Rather than wade into these issues, we use the fact that generative models are inherently probabilistic. Hence we can use distance measures between distributions that are information-theoretic and agnostic to superficial issues such as pixel-based representations. Our formalization is the following:

Definition 1 (Near Access Freeness – NAF): Let be a conditional generative model,

be a prompt. Suppose that for every copyrighted data

in the training set,

is a model that has not accessed

. We say that

is

– near access-free with respect to

and a function

, if

where

is a divergence measure such as the KL divergence or the Renyi divergence of order infinity.

This definition reduces the task of determining a copyright infringement to (1) a quantitative question of the acceptable value of , and (2) a qualitative question of providing a

function that appropriately satisfies a no access condition. Both can be application-dependent: the number of bits that constitute copyrightable content differs between, e.g., poems and images, and the

function could also differ based on application.

Definition 1 is stringent in the sense that it bounds (by ) the number of bits that could be “leaked” from

to the output of the generative model, no matter what transformation was used. Note that if a model was trained without access to

then we expect the likelihood of outputting a work similar to

should be extremely low, as this is a “monkeys on a typewriter” event. Furthermore, even if this event happened, then under copyright law, it would not be an infringement since (to quote Feist v. Rural) it would constitute “fortuitous similarity.”

Algorithms

Given the restrictive nature of the definition, one may be concerned that trying to achieve it would result in losing much of the utility of the original generative model. Fortunately, as our work shows, this is not the case. We provide several algorithms that can transform, in a black-box manner, any training pipeline for a generative model into one that produces models that have strong copyright protections under our definition. We now illustrate two of these:

Algorithm :

Input: A dataset , where some of the points

contain some copyrighted work. For such a copyrighted point

, we also denote it by

.

Learning: First de-deduplicate (resulting in a dataset with

points), and then split it into two disjoint shards,

and

. Then train two models

on

and

, respectively.

The Output Generative Model: Return the generative model as follows: On input a prompt

, generate

with probability proportional to

.

Note that for any copyrighted work one of either

and

would have been trained without access to

. The intuition of the algorithm is as follows: the output model

has the property that it tends to have probability mass only in the region where both

and

have probability mass; therefore, for any copyrighted work

, if

outputs

with reasonable probability then this should not be a violation since

tends to also be output by a model that was trained without access to the copyrighted work

itself. To formalize this, let us make the following choice for the

function: define

, for

s.t.

, i.e.

is the model trained without access to

. The paper formally shows that as long as the two models

and

have some non-trivial overlap (specifically their squared Hellinger distance is bounded away from 1), then the model

will satisfy our definition for some

(based on this Hellinger distance). In particular, for every copyrighted work

, the distribution

will have bounded KL divergence from the model

.

The intuition is provided in the following animation

Imagine that both and

are “faulty” in the sense that they have a significant chance of outputting a “memorized” sample from their training set (or an output substantially similar to it). The “faulty” regions are the “spikes” in their probability distribution, and, since the training sets are disjoint, these two “spikes” will be in different places. Hence when we switch to the probability distribution

it will have the effect of “flattening” the spikes and shifting most weight to the other parts of the probability distribution. Another alternative is for

to output the model

(this provides a guarantee in terms of the Max-KL divergence, and it replaces the assumption on overlap, defined with respect to the squared Hellinger distance, to be instead defined with respect to the total variation distance).

There a number of modifications to worth considering for more practical deployments. In some cases, directly computing the aforementioned probability distributions may be challenging. Furthermore, it may be desirable to utilize some arbitrary generative model

(say

was trained on the full dataset

) where we seek to modify

into a model that has strong protections against copyright violations (and which preserves the quality of

to the extent possible). Finally, it may be desirable to explicitly tune the acceptable value of

. Our next algorithm addresses these concerns and makes use of a tunable parameter

. It is specified as follows:

Algorithm : An Access-Free Reduction at Threshold

Input: A model ; A dataset

.

Learning: First de-deduplicate and split it into two disjoint shards,

and

. Then train two models

on

and

, respectively.

The Output Generative Model: Return the generative model defined as follows: On input a prompt

, first sample

and then accept

if

, for

. (Otherwise, resample.)

The intuition of is as follows: we first sample the output from

and only accept this output if the likelihood ratio to the

function (on any

) satisfies a desired upper bound, the latter of which can be verified by checking the likelihood ratio with respect to both

and

. Since

transforms the output of

(i.e. it throws aways probability mass which could be potential copyright violations), one might be concerned that we will degrade the quality of the original model

. Fortunately, we show when this is not the case. We give formal theoretical results on the effectiveness of the approach based on the information distances between

and

and

; in fact, we sometimes even improve on the quality of

with this approach. We also specify the relationship between the desired

and tunable parameter

.

An Illustrative Experiment

We now present a qualitative experiment demonstrating how applying our algorithm to memorizing models produces a model that no longer memorizes. Specifically, we first augment CIFAR-10 with multiple copies of two images (images close to the augmented images are marked with red boundaries); hypothetically, suppose these two images are copyrighted works. For illustrative purposes, we do not deduplicate our dataset. Note our goal here is not to simply present a heuristic approach, such as deduplication, that “often works in practice,” but it is to show that an algorithm with rigorous guarantees can also be practical.

The leftmost image shows generations from a model that was trained on the full dataset, where we clearly see that

generates the two copyrighted works. Our algorithm starts by splitting this dataset into two disjoint datasets, where the two copyrighted images are split into two different shards, and it trains two models

on these disjoint shards. The result is two models such that each generates the CIFAR-10 distribution, but also has a significant chance to output the memorized example. Yet when we combine all three of models using the CP-k algorithm, we obtain a model that agrees with them on the shared part of the distribution but is highly unlikely to output either one of the memorized images.

See the paper ( https://arxiv.org/abs/2302.10870 ) for the full details of our definitions, theorems, and experiments. We believe that there is much room for follow-up work, including optimization of performance, as well as much larger-scale experiments.