Guest post by Abhishek Anand and Noah Miller from the physics and computation seminar.

In 2013, Harlow and Hayden drew an unexpected connection between theoretical computer science and theoretical physics when they proposed a potential resolution to the famous black hole Firewall paradox using computational complexity arguments. This blog post attempts to lay out the Firewall paradox and other (at first) peculiar properties of black holes that make them such intriguing objects to study. This post is inspired by Scott Aaronson’s [1] and Daniel Harlow’s [2] excellent notes on the same topic. The notes accompanying this post provide a thorough and self-contained introduction to theoretical physics from a CS perspective. Furthermore, for a quick and intuitive summary of the Firewall paradox and its link to computational complexity, refer to this blog post by Professor Barak last summer.

Black holes and conservative radicalism

Black holes are fascinating objects. Very briefly, they are regions of spacetime where the matter-energy density is so high, and hence the gravitational effects so strong, that no particle (not even light!) can escape from them. More specifically, we define a particular distance called the “Schwarzschild radius,” and anything that passes within the Schwarzschild radius (the surface at this radius is known as the “event horizon”) can never escape from the black hole. General relativity predicts that such a particle is bound to hit the “singularity,” where spacetime curvature becomes infinite. In the truest sense of the word, black holes represent the “edge cases” of our Universe. Hence, perhaps, it is fitting that physicists believe that through thought experiments at these edge cases, they can investigate the true behavior of the laws that govern our Universe.

Once you know that such an object exists, many questions arise: what would it look like from the outside? Could we already be within the event horizon of a future black hole? How much information does it store? Would something special be happening at the Schwarzschild radius? How would the singularity manifest physically?

The journey of trying to answer these questions can aptly be described by the term “radical conservatism.” This is a phrase that has become quite popular in the physics community. A “radical conservative” is someone who tries to modify as few laws of physics as possible (that’s the conservative part) and who, through a dogmatic refusal to modify these laws and a willingness to go wherever the reasoning leads (that’s the radical part), is able to derive amazing things. We radically use the given system of beliefs to reach certain conclusions (sometimes paradoxes!), then conservatively update the system of beliefs to resolve the paradox, and iterate. We shall go through a few such cycles and end at the Firewall paradox. Let’s begin with the first problem: how much information does a black hole store?

Entropy of a black hole

A black hole is a physical object. Hence, it should be able to store some information. But how much? In other words, what should the entropy of a black hole be? There are two simple ways of looking at this problem:

- 0: The no-hair theorem states that an outside observer can measure a small number of quantities which completely characterize the black hole. There’s the mass of the black hole, which is its most important quantity. Interestingly, if the star was spinning before it collapsed, the black hole will also have some angular momentum, and its equator will bulge out a bit. Hence, the black hole is also characterized by an angular momentum vector. Also, if the object had some net charge, the black hole would also have that net charge. This means that if two black holes were created from a book and a pizza, respectively, with the same mass, charge and angular momentum, they would settle down to the “same” black hole with no observable difference. If an outside observer knows these quantities, they know everything about the black hole. So, in this view, we should expect the entropy of a black hole to be 0.

- Unbounded: But maybe that’s not entirely fair. After all, the contents of the star should somehow be contained in the singularity, hidden behind the horizon. As we saw above, the specific details of the star from before the collapse do not have any effect on the properties of the resulting black hole. The only stuff that matters is the total mass, the total angular momentum, and the total charge. That leaves an infinite number of possible objects that could all have produced the same black hole: a pizza or a book or a PlayStation and so on. So actually, perhaps, we should expect the entropy of a black hole to be unbounded.

The first answer troubled Jacob Bekenstein. He was a firm believer in the Second Law of Thermodynamics: the total entropy of an isolated system can never decrease over time. However, if the entropy of a black hole is 0, it provides a way to reduce the entropy of any system: just dump objects with non-zero entropy into the black hole.

Bekenstein drew connections between the area of the black hole and its entropy. For example, the way in which a black hole’s area could only increase (according to classical general relativity) seemed reminiscent of entropy. Moreover, when two black holes merge, the area of the final black hole will always exceed the sum of the areas of the two original black holes. This is surprising because, for two ordinary spheres, the area (and radius) of the merged sphere is always less than the sum of the areas (and radii) of the two individual spheres.
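The contrast between merging black holes and merging ordinary spheres can be checked with a few lines of arithmetic. The sketch below makes some assumptions that are not in the text above: the black holes are non-rotating and uncharged (so the horizon radius is proportional to the mass), the energy carried away during the merger is ignored, and the ordinary spheres merge at fixed total volume. The function names and example values are purely illustrative.

```python
import math


def horizon_area(mass):
    """Horizon area of a non-rotating black hole in units with G = c = 1 (r_s = 2M)."""
    r_s = 2.0 * mass
    return 4.0 * math.pi * r_s ** 2


def sphere_area(radius):
    """Surface area of an ordinary sphere."""
    return 4.0 * math.pi * radius ** 2


m1, m2 = 3.0, 5.0  # arbitrary example masses

# Black holes: the radius is proportional to the mass, so radii (roughly) add
# and the area grows faster than additively.
area_sum_bh = horizon_area(m1) + horizon_area(m2)
area_merged_bh = horizon_area(m1 + m2)

# Ordinary spheres merged at fixed volume: r^3 adds, so the area grows
# slower than additively.
r1, r2 = 3.0, 5.0  # arbitrary example radii
r_merged = (r1 ** 3 + r2 ** 3) ** (1.0 / 3.0)
area_sum_sphere = sphere_area(r1) + sphere_area(r2)
area_merged_sphere = sphere_area(r_merged)

print(area_merged_bh > area_sum_bh)          # True
print(area_merged_sphere < area_sum_sphere)  # True
```

In a real merger some mass is radiated away, so the final area is smaller than this idealized value, but the area theorem says it still cannot drop below the sum of the original areas.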

Most things we’re used to, like a box of gas, have an entropy that scales linearly with their volume. However, black holes are not like most things. Bekenstein predicted that the entropy of a black hole should be proportional to its area $A$ and not its volume. We now believe that Bekenstein was right, and it turns out that the entropy of the black hole can be written as:

$$S_{BH} = \frac{k_B A}{4 \ell_P^2}$$

where $k_B$ is the Boltzmann constant and

$$\ell_P = \sqrt{\frac{\hbar G}{c^3}}$$

is the Planck length, a length scale where physicists believe quantum mechanics breaks down and a quantum theory of gravity will be required. Interestingly, it seems as though the entropy of the black hole is (one-fourth times) the number of Planck-length-sized squares it would take to tile the horizon area. (Perhaps the microstates of the black hole are “stored” on the horizon?) Using “natural units” where we set all constants to 1, we can write this as

$$S_{BH} = \frac{A}{4}$$

which is very pretty. Even though this number is not infinite, it is very large. Here are some numerical estimates from [2]. The entropy of the universe (minus all the black holes) mostly comes from cosmic microwave background radiation and is about in some units. Meanwhile, in the same units, the entropy of a solar mass black hole is

. The entropy of our sun, as it is now, is a much smaller

. The entropy of the supermassive black hole in the center of our galaxy is

, larger than the rest of the universe combined (minus black holes). The entropy of any of the largest known supermassive black holes would be

. Hence, there is a simple “argument” which suggests that black holes are the most efficient information storage devices in the universe: if you wanted to store a lot of information in a region smaller than a black hole horizon, it would probably have to be so dense that it would just be a black hole anyway.
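The area law is easy to evaluate numerically. The sketch below assumes a non-rotating, uncharged black hole, for which the entropy in units of $k_B$ works out to $4\pi G M^2 / (\hbar c)$. The constants are rounded, and the 4-million-solar-mass value is only an illustrative assumption for a galactic-center-scale black hole. Because the estimates quoted from [2] are stated in "some units", the output here need not match them exactly.

```python
import math

# Rounded physical constants in SI units.
G = 6.674e-11       # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8         # speed of light, m/s
HBAR = 1.0546e-34   # reduced Planck constant, J s
M_SUN = 1.989e30    # solar mass, kg


def bh_entropy_over_kb(mass_kg):
    """Entropy S / k_B = A / (4 l_P^2) = 4 pi G M^2 / (hbar c) for a non-rotating black hole."""
    return 4.0 * math.pi * G * mass_kg ** 2 / (HBAR * C)


solar_mass_bh = bh_entropy_over_kb(M_SUN)
# Assumed illustrative mass for a supermassive black hole: 4 million solar masses.
supermassive_bh = bh_entropy_over_kb(4.0e6 * M_SUN)

print(f"solar-mass black hole:   S/k_B ~ {solar_mass_bh:.2e}")    # on the order of 1e77
print(f"supermassive black hole: S/k_B ~ {supermassive_bh:.2e}")  # on the order of 1e90
```

Since the entropy grows as the square of the mass, doubling the mass quadruples the entropy, which is why the supermassive case dominates so heavily.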

However, this resolution to “maintain” the second law of thermodynamics leads to a radical conclusion: if a black hole has non-zero entropy, it must have a non-zero temperature and hence, must emit thermal radiation. This troubled Hawking.

Hawking radiation and black hole evaporation

Hawking did a semi-classical computation looking at energy fluctuations near the horizon and actually found that black holes do radiate! They emit energy in the form of very low-energy particles. This is a unique feature of what happens to black holes when you take quantum field theory into account and is very surprising. However, the Hawking radiation from any actually existing black hole is far too weak to have been detected experimentally.

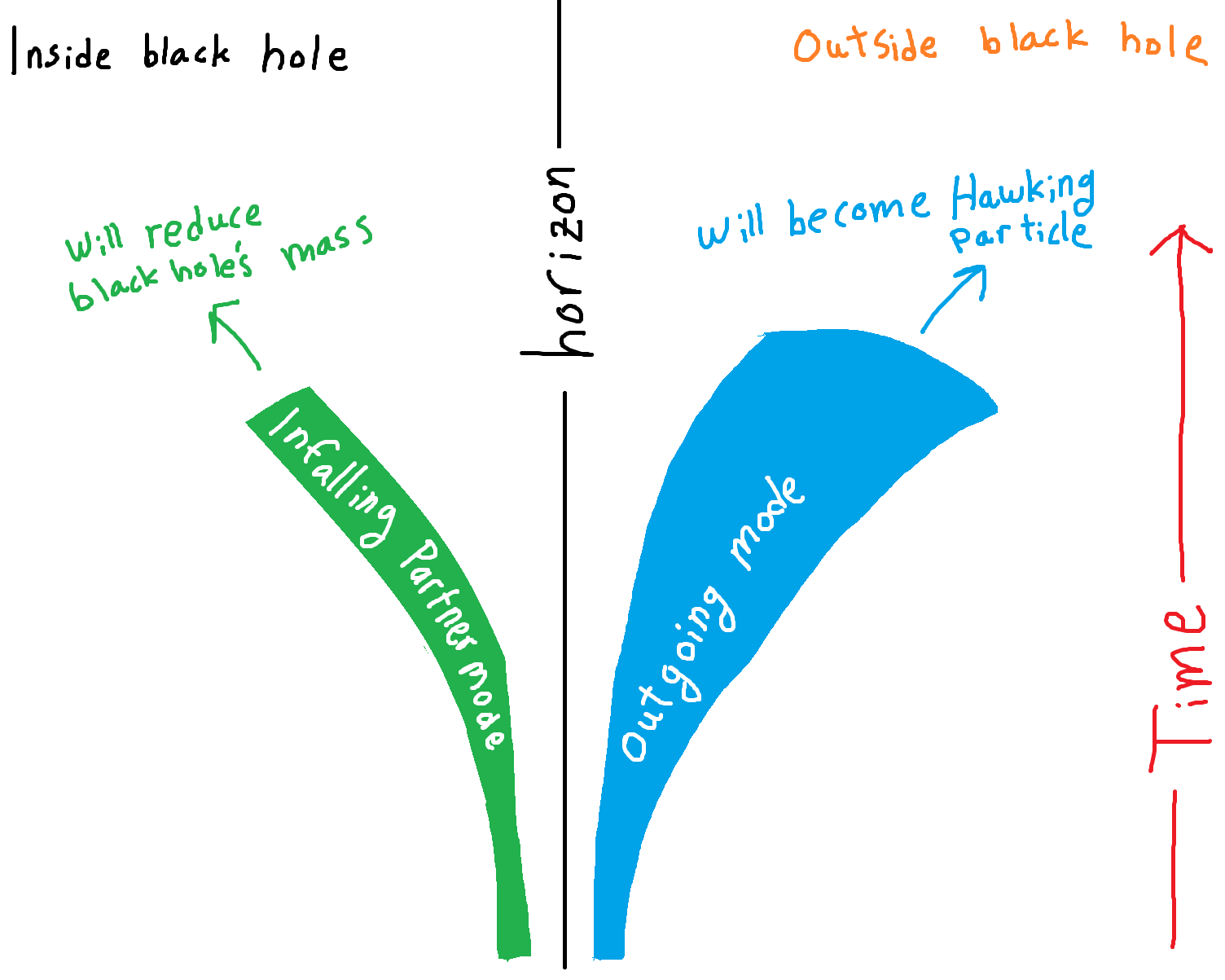

One simplified way to understand Hawking radiation is to think about pairs of highly correlated modes (think “particles”) being formed continuously near the horizon. As this formation must conserve energy, one of these particles has negative energy and the other has the same energy but with a positive sign. Hence, they are maximally entangled (if you know the energy of one of the particles, you know the energy of the other one): we will refer to this as short-range entanglement. The one with negative energy falls into the black hole while the one with positive energy comes out as Hawking radiation. The maximally-entangled state of the modes looks like:

Here is a cartoon that represents the process:

A cartoon of the Hawking partner modes. The shaded region shows the width of the Gaussian wavepackets. The outgoing mode redshifts and spreads.

Because positive-energy particles are leaving the black hole and negative-energy particles are adding to it, the black hole itself will actually shrink, which would never happen classically! And eventually, a black hole will disappear. In fact, the evaporation time of the black hole scales polynomially in the radius $R$ of the black hole, as $R^3$. The black holes that we know about are simply too big and would be shrinking too slowly. A stellar-mass black hole would take on the order of $10^{67}$ years to disappear from Hawking radiation.
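To get a feel for the scales involved, the following sketch evaluates the standard textbook estimates for the Hawking temperature, $T = \hbar c^3 / (8 \pi G M k_B)$, and the evaporation time, $t = 5120 \pi G^2 M^3 / (\hbar c^4)$. These formulas assume a non-rotating, uncharged black hole that radiates only photons and absorbs nothing, so the result is an order-of-magnitude estimate rather than a precise prediction. The constants are rounded and the function names are illustrative.

```python
import math

# Rounded physical constants in SI units.
G = 6.674e-11        # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8          # speed of light, m/s
HBAR = 1.0546e-34    # reduced Planck constant, J s
K_B = 1.381e-23      # Boltzmann constant, J/K
M_SUN = 1.989e30     # solar mass, kg
SECONDS_PER_YEAR = 3.156e7


def hawking_temperature_kelvin(mass_kg):
    """Hawking temperature T = hbar c^3 / (8 pi G M k_B)."""
    return HBAR * C ** 3 / (8.0 * math.pi * G * mass_kg * K_B)


def evaporation_time_years(mass_kg):
    """Evaporation time t = 5120 pi G^2 M^3 / (hbar c^4), converted to years."""
    seconds = 5120.0 * math.pi * G ** 2 * mass_kg ** 3 / (HBAR * C ** 4)
    return seconds / SECONDS_PER_YEAR


temperature = hawking_temperature_kelvin(M_SUN)
lifetime = evaporation_time_years(M_SUN)

print(f"temperature of a solar-mass black hole: {temperature:.2e} K")
print(f"evaporation time of a solar-mass black hole: {lifetime:.2e} years")
```

The temperature comes out far below that of the cosmic microwave background, which is one way to see why this radiation has not been detected, and the cubic dependence on mass means that a black hole twice as heavy lives eight times as long.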

However, the fact that black holes disappear does not play nicely with another core belief in physics: reversibility.

Unitary evolution and thermal radiation

A core tenet of quantum mechanics is unitary evolution: every operation that happens to a quantum state must be reversible (invertible). That is: if we know the final state and the set and order of operations performed, we should be able to invert the operations and get back the initial state. No information is lost. However, something weird happens with an evaporating black hole. First, let us quickly review pure and mixed quantum states. A pure state is a quantum state that can be described by a single ket vector, while a mixed state represents a classical (probabilistic) mixture of pure states and can be expressed using density matrices. For example, in both the pure state

$$|\psi\rangle = \frac{1}{\sqrt{2}}\left(|0\rangle + |1\rangle\right)$$

and the mixed state

$$\rho = \frac{1}{2}\left(|0\rangle\langle 0| + |1\rangle\langle 1|\right)$$

one would measure $|0\rangle$ half the time and $|1\rangle$ half the time. However, in the latter one would not observe any quantum effects (think interference patterns of the double-slit experiment).
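The difference between a pure superposition and a classical 50/50 mixture can be made concrete with a small NumPy sketch. It builds the equal superposition of $|0\rangle$ and $|1\rangle$ and the equal classical mixture of the two, checks that a computational-basis measurement cannot tell them apart, and then applies a Hadamard gate as a stand-in for an "interference experiment". The choice of the Hadamard gate and all names are illustrative assumptions.

```python
import numpy as np

ket0 = np.array([1.0, 0.0])
ket1 = np.array([0.0, 1.0])
plus = (ket0 + ket1) / np.sqrt(2)

# Pure state |+><+| versus the classical mixture (|0><0| + |1><1|) / 2.
rho_pure = np.outer(plus, plus.conj())
rho_mixed = 0.5 * np.outer(ket0, ket0) + 0.5 * np.outer(ket1, ket1)

# Hadamard gate: rotates the measurement basis so that interference shows up.
HADAMARD = np.array([[1.0, 1.0], [1.0, -1.0]]) / np.sqrt(2)


def measurement_probs(rho):
    """Born-rule probabilities for a computational-basis measurement."""
    return np.real(np.diag(rho))


def evolve(rho, unitary):
    """Apply a unitary to a density matrix: rho -> U rho U^dagger."""
    return unitary @ rho @ unitary.conj().T


print("direct measurement, pure: ", measurement_probs(rho_pure))
print("direct measurement, mixed:", measurement_probs(rho_mixed))
print("after Hadamard, pure: ", measurement_probs(evolve(rho_pure, HADAMARD)))
print("after Hadamard, mixed:", measurement_probs(evolve(rho_mixed, HADAMARD)))
```

Measured directly, both states give 50/50 statistics. After the Hadamard, the pure state always yields outcome 0 (the two amplitudes interfere), while the mixed state still gives 50/50.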

People outside of the black hole will not be able to measure the objects (quantum degrees of freedom) that are inside the black hole. They will only be able to perform measurements on a subset of the information: the part available outside of the event horizon. So, the state they would measure would be a mixed state. A simple example to explain what this means is that if the state of the particles near the horizon is

$$|\Psi\rangle_{AB} = \frac{1}{\sqrt{2}}\left(|0\rangle_A |0\rangle_B + |1\rangle_A |1\rangle_B\right),$$

tracing over the qubit $A$ leaves us with the density matrix

$$\rho_B = \frac{1}{2}\left(|0\rangle\langle 0| + |1\rangle\langle 1|\right) = \frac{1}{2}\begin{pmatrix} 1 & 0 \\ 0 & 1 \end{pmatrix},$$

which is a classical mixed state (50% of the time a measurement gives 1 and 50% of the time it gives 0). The off-diagonal entries of the density matrix encode the “quantum interference” of the quantum state. Here they are zero, so in some sense we have lost the “quantum” aspect of the information.
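The partial trace in this example takes only a few lines of NumPy. The sketch below assumes the two-qubit state is the Bell pair $(|00\rangle + |11\rangle)/\sqrt{2}$ with qubit $A$ listed first, and it uses `np.einsum` to sum over the indices of $A$. The variable names are illustrative.

```python
import numpy as np

# Bell pair (|00> + |11>) / sqrt(2); basis index = 2 * a + b.
bell = np.zeros(4)
bell[0] = bell[3] = 1.0 / np.sqrt(2)

# Full density matrix of the pair, viewed as a tensor with indices (a, b, a', b').
rho_ab = np.outer(bell, bell.conj()).reshape(2, 2, 2, 2)

# Partial trace over A: set a = a' and sum.
rho_b = np.einsum("abac->bc", rho_ab)

# Purity Tr(rho^2) is 1 for a pure state and 1/2 for a maximally mixed qubit.
purity_b = float(np.real(np.trace(rho_b @ rho_b)))

print(rho_b)
print("purity of the outside qubit:", purity_b)
```

The reduced matrix is half the identity: the off-diagonal entries vanish and the purity drops to 1/2, even though the joint state of both qubits is perfectly pure.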

In fact, Hawking went and traced over the field degrees of freedom that were hidden behind the event horizon, and found something surprising: the mixed state was thermal! It looked “as if” it were being emitted by some object with temperature $T$, which does not depend on what formed the black hole and depends solely on the mass of the black hole. Now, we have the information paradox:

- Physics perspective: Now, once the black hole evaporates, we are left with this mixed thermal state, and there is no way to precisely reconstruct the initial state that formed the black hole: the black hole has taken away information! Once the black hole is gone, the information of what went into the black hole is gone for good. Nobody living in the post-black-hole universe could figure out exactly what went into the black hole, even if they had full knowledge of the radiation. Another way to derive a contradiction is that the process of black hole evaporation, when combined with the disappearance of the black hole, implies that a pure state has evolved into a mixed state, something which is impossible via unitary time evolution! Pure states only become mixed states whenever we decide to perform a partial trace; they never become mixed because of Schrödinger’s equation, which governs the evolution of quantum states.

- CS perspective: We live in a world where only invertible functions are allowed. However, we are given this exotic function – the black hole – which seems to be a genuine random one-to-many function. There is no way to determine the input deterministically given the output of the function.

What gives? If the process of black hole evaporation is truly “non-unitary,” it would be a first for physics. We have no way to make sense of quantum mechanics without the assumption of unitary operations and reversibility; hence, it does not seem very conservative to get rid of it.

Physicists don’t know exactly how information is conserved, but they think that if they assume that it is, it will help them figure out something about quantum gravity. Most physicists believe that the process of black hole evaporation should indeed be unitary. The information of what went into the black hole is being released via the radiation in a way too subtle for us to currently understand. What does this mean?

- Physics perspective: Somehow, after the black hole is gone, the final state we observe, after tracing over the degrees of freedom taken away by the black hole, is pure and encodes all information about what went inside the black hole. That is:

- CS perspective: Somehow, the exotic black hole function seems random but actually is pseudo-random as well as injective and given the output and enough time, we can decode it and determine the input (think hash functions!).

However, this causes yet another unwanted consequence: the violation of the no-cloning theorem!

Xeroxing problem and black hole complementarity

The no-cloning theorem simply states that an arbitrary quantum state cannot be copied. In other words, if you have one qubit representing some initial state, no matter what operations you do, you cannot end up with two qubits with the same state you started with. How do our assumptions violate this?

Say you are outside the black hole and send in a qubit with some information (input to the function). You collect the radiation corresponding to the qubit (output of the function) that came out. Now you decode this radiation (output) to determine the state of infalling matter (input). Aha! You have violated the no-cloning theorem as you have two copies of the same state: one inside and one outside the black hole.
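As a reminder of why copying is forbidden in the first place, here is a small NumPy sketch of the most obvious candidate for a "quantum copier": a CNOT gate acting on the input qubit and a blank qubit. It copies the basis states $|0\rangle$ and $|1\rangle$ correctly but fails on a superposition. The choice of CNOT as the would-be copier is an illustrative assumption; the no-cloning theorem itself rules out every unitary, not just this one.

```python
import numpy as np

ket0 = np.array([1.0, 0.0])
ket1 = np.array([0.0, 1.0])
plus = (ket0 + ket1) / np.sqrt(2)

# CNOT with the first qubit as control; basis order |00>, |01>, |10>, |11>.
CNOT = np.array([
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
    [0.0, 0.0, 1.0, 0.0],
])


def try_to_copy(psi):
    """Attempt to copy psi onto a blank |0> qubit using a CNOT."""
    return CNOT @ np.kron(psi, ket0)


def copy_fidelity(psi):
    """Overlap squared between the attempted copy and the ideal result psi (x) psi."""
    ideal = np.kron(psi, psi)
    return float(abs(np.vdot(ideal, try_to_copy(psi))) ** 2)


print("fidelity for |0>:", copy_fidelity(ket0))
print("fidelity for |1>:", copy_fidelity(ket1))
print("fidelity for |+>:", copy_fidelity(plus))
```

For the superposition, the CNOT produces an entangled Bell pair rather than two independent copies, so the fidelity with the ideal copied state is only 1/2.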

So wait, again, what gives?

One possible resolution is to postulate that the inside of the black hole just does not exist. However, that doesn’t seem very conservative. According to Einstein’s theory of relativity, locally speaking, there is nothing particularly special about the horizon: hence, one should be able to cross the horizon and move towards the singularity peacefully.

The crucial observation is that for the person who jumped into the black hole, the outside universe may as well not exist; they can not escape. Extending this further, perhaps, somebody on the outside does not believe the interior of the black hole exists and somebody on the inside does not believe the exterior exists and they are both right. This hypothesis, formulated in the early 1990s, has been given the name of Black Hole Complementarity. The word “complementarity” comes from the fact that two observers give different yet complementary views of the world.

In this view, according to someone on the outside, instead of entering the black hole at some finite time, the infalling observer will instead be stopped at some region very close to the horizon, which is quite hot when you get up close. Then, the Hawking radiation coming off of the horizon will hit the observer on its way out, carrying the information about them which has been plastered on the horizon. So the outside observer, who is free to collect this radiation, should be able to reconstruct all the information about the person who went in. Of course, that person will have burned up near the horizon and will be dead.

From the infalling observer’s perspective, however, they were able to pass peacefully through the black hole and sail on to the singularity. So from their perspective, they live, while from the outside it looks like they died. However, no contradiction can be reached, because nobody has access to both realities.

But why is that? Couldn’t the outside observer see the infalling observer die and then rocket themselves straight into the black hole themselves to meet the alive person once again before they hit the singularity, thus producing a contradiction?

The core idea is that it must take some time for the infalling observer to “thermalize” (equilibrate) on the horizon: enough time for the infalling observer to reach the singularity and hence become completely inaccessible. Calculations do show this to be true. In fact, we can already get a taste of complexity theory in this argument: we are assuming that some process is slower than some other process.

In summary, according to the BHC worldview, the information outside the horizon is redundant with the information inside the horizon.

But, in 2012, a new paradox, the Firewall paradox, was introduced by AMPS [3]. This paradox seems to be immune to BHC: the paradox exists even if we assume everything we have discussed till now. The physics principle we violate, in this case, is the monogamy of entanglement.

Monogamy of entanglement and Page time

Before we state the Firewall paradox, we must introduce two key concepts.

Monogamy of entanglement

Monogamy of entanglement is a statement about the maximum entanglement a particle can share with other particles. More precisely, if two particles A and B are maximally entangled with each other, they cannot be entangled at all with a third particle C. Two maximally entangled particles have saturated both of their “entanglement quotas.” In order for them to have correlations with other particles, they must decrease their entanglement with each other.
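This trade-off can be illustrated numerically. The sketch below uses quantum mutual information, $I(X{:}Y) = S(X) + S(Y) - S(XY)$, as a rough proxy for how strongly two qubits are correlated. This is an assumption made for simplicity: mutual information counts classical correlations too and is not a proper entanglement measure. Two three-qubit states are compared: a Bell pair on A and B with C left alone, and a GHZ state $(|000\rangle + |111\rangle)/\sqrt{2}$ in which A is correlated with both B and C. The helper names are illustrative.

```python
import numpy as np


def reduced_density_matrix(state, keep, n_qubits):
    """Density matrix of the qubits in `keep`, tracing out all the others."""
    psi = state.reshape([2] * n_qubits)
    traced = [q for q in range(n_qubits) if q not in keep]
    psi = np.transpose(psi, list(keep) + traced)
    psi = psi.reshape(2 ** len(keep), 2 ** len(traced))
    return psi @ psi.conj().T


def entropy_bits(rho):
    """Von Neumann entropy in bits."""
    eigenvalues = np.linalg.eigvalsh(rho)
    eigenvalues = eigenvalues[eigenvalues > 1e-12]
    return float(-np.sum(eigenvalues * np.log2(eigenvalues)))


def mutual_information(state, x, y, n_qubits=3):
    """I(X:Y) = S(X) + S(Y) - S(XY) for single qubits x and y."""
    s_x = entropy_bits(reduced_density_matrix(state, [x], n_qubits))
    s_y = entropy_bits(reduced_density_matrix(state, [y], n_qubits))
    s_xy = entropy_bits(reduced_density_matrix(state, [x, y], n_qubits))
    return s_x + s_y - s_xy


A, B, C = 0, 1, 2  # qubit order: A, then B, then C

# Bell pair on (A, B), with C in the state |0>.
bell = np.array([1.0, 0.0, 0.0, 1.0]) / np.sqrt(2)
bell_ab_and_c = np.kron(bell, np.array([1.0, 0.0]))

# GHZ state (|000> + |111>) / sqrt(2).
ghz = np.zeros(8)
ghz[0] = ghz[7] = 1.0 / np.sqrt(2)

print("Bell pair: I(A:B) =", mutual_information(bell_ab_and_c, A, B))
print("Bell pair: I(A:C) =", mutual_information(bell_ab_and_c, A, C))
print("GHZ state: I(A:B) =", mutual_information(ghz, A, B))
print("GHZ state: I(A:C) =", mutual_information(ghz, A, C))
```

With the Bell pair, A and B reach the maximum of 2 bits and A shares nothing with C. In the GHZ state, A is correlated with both partners, but each pairwise value is only 1 bit, below the maximum.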

Monogamy of entanglement can be understood as a static version of the no-cloning theorem. Here is a short proof sketch of why polygamy of entanglement would imply a violation of the no-cloning theorem.

Let’s take a short detour to explain quantum teleportation:

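Say you have three particles A, B, and C, with A and B maximally entangled (a Bell pair) and C in an arbitrary quantum state:

$$|\Phi^+\rangle_{AB} = \frac{1}{\sqrt{2}}\left(|0\rangle_A |0\rangle_B + |1\rangle_A |1\rangle_B\right), \qquad |\psi\rangle_C = \alpha |0\rangle_C + \beta |1\rangle_C$$

We can write their total state as:

$$|\psi\rangle_C \otimes |\Phi^+\rangle_{AB} = \frac{1}{\sqrt{2}}\left(\alpha |0\rangle_C + \beta |1\rangle_C\right)\left(|0\rangle_A |0\rangle_B + |1\rangle_A |1\rangle_B\right)$$

Re-arranging and pairing A and C, the state simplifies to:

$$\frac{1}{2}\Big[\, |\Phi^+\rangle_{CA}\left(\alpha |0\rangle_B + \beta |1\rangle_B\right) + |\Phi^-\rangle_{CA}\left(\alpha |0\rangle_B - \beta |1\rangle_B\right) + |\Psi^+\rangle_{CA}\left(\alpha |1\rangle_B + \beta |0\rangle_B\right) + |\Psi^-\rangle_{CA}\left(\alpha |1\rangle_B - \beta |0\rangle_B\right) \Big]$$

where $|\Phi^\pm\rangle = (|00\rangle \pm |11\rangle)/\sqrt{2}$ and $|\Psi^\pm\rangle = (|01\rangle \pm |10\rangle)/\sqrt{2}$ are the four Bell states. This means that if one does a Bell measurement on A and C, then based on the measurement outcome we know exactly which state B is projected to, and by using rotations we can make the state of B equal to the initial state of C. Hence, we have teleported quantum information from C to B.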

Now, assume that A was maximally entangled to both B and D. Then by doing the same procedure, we could teleport quantum information from C to both B and D and hence, violate the no-cloning theorem!
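The teleportation step used in this argument can be simulated directly. The sketch below assumes qubit order (C, A, B), a Bell pair $(|00\rangle + |11\rangle)/\sqrt{2}$ shared by A and B, and a randomly chosen state on C. For each of the four possible Bell-measurement outcomes on (C, A) it projects the joint state, applies a Pauli correction to B, and compares the result with the original state of C. The outcome labels and the correction table are standard conventions, spelled out here as assumptions of the sketch.

```python
import numpy as np

rng = np.random.default_rng(0)

# Arbitrary (random) state to teleport, held by C.
amplitudes = rng.normal(size=2) + 1j * rng.normal(size=2)
psi_c = amplitudes / np.linalg.norm(amplitudes)

# Bell pair shared by A and B.
bell_ab = np.array([1.0, 0.0, 0.0, 1.0]) / np.sqrt(2)

# Joint state with rows indexed by (c, a) and columns by b.
total = np.kron(psi_c, bell_ab).reshape(4, 2)

IDENTITY = np.eye(2)
PAULI_X = np.array([[0.0, 1.0], [1.0, 0.0]])
PAULI_Z = np.array([[1.0, 0.0], [0.0, -1.0]])

# Each Bell outcome on (C, A) paired with the correction to apply to B.
outcomes = {
    "Phi+": (np.array([1.0, 0.0, 0.0, 1.0]) / np.sqrt(2), IDENTITY),
    "Phi-": (np.array([1.0, 0.0, 0.0, -1.0]) / np.sqrt(2), PAULI_Z),
    "Psi+": (np.array([0.0, 1.0, 1.0, 0.0]) / np.sqrt(2), PAULI_X),
    "Psi-": (np.array([0.0, 1.0, -1.0, 0.0]) / np.sqrt(2), PAULI_Z @ PAULI_X),
}

results = {}
for name, (bell_ca, correction) in outcomes.items():
    # Project (C, A) onto this Bell state; what is left is B's unnormalized state.
    unnormalized_b = bell_ca.conj() @ total
    probability = float(np.vdot(unnormalized_b, unnormalized_b).real)
    state_b = correction @ (unnormalized_b / np.sqrt(probability))
    fidelity = float(abs(np.vdot(psi_c, state_b)) ** 2)
    results[name] = (probability, fidelity)
    print(f"{name}: probability = {probability:.3f}, fidelity = {fidelity:.3f}")
```

Each outcome occurs with probability 1/4 and, after the correction, B holds the original state of C (up to a global phase). If A could be maximally entangled with a second partner D as well, the same measurement would leave D with a copy too, which is exactly the cloning that is forbidden.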

Page time

Named after Don Page, the “Page time” refers to the time when the black hole has emitted enough of its energy in the form of Hawking radiation that its entropy has (approximately) halved. Now the question is, what’s so special about the Page time?

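First note that the rank of the density matrix is closely related to its purity (or mixedness). For example, a completely mixed state on an $N$-dimensional Hilbert space is the diagonal matrix

$$\rho = \frac{1}{N}\begin{pmatrix} 1 & & \\ & \ddots & \\ & & 1 \end{pmatrix},$$

which has maximal rank ($N$). Furthermore, a completely pure state $|\psi\rangle$ can always be represented as (if we just change the basis and make the first column/row represent $|\psi\rangle$):

$$\rho = |\psi\rangle\langle\psi| = \begin{pmatrix} 1 & & & \\ & 0 & & \\ & & \ddots & \\ & & & 0 \end{pmatrix},$$

which has rank 1.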

Imagine we have watched a black hole form and begin emitting Hawking radiation. Say we start collecting this radiation. The density matrix of the radiation will have the form:

$$\rho = \sum_{i=1}^{2^{n-k}} p_i\, |\psi_i\rangle\langle\psi_i|$$

where $n$ is the total number of qubits in our initial state, $k$ is the number of qubits outside (in the form of radiation), and $p_i$ is the probability of each state. We are simply tracing over the degrees of freedom inside the black hole (as there are $n-k$ degrees of freedom inside the black hole, the dimensionality of this space is $2^{n-k}$).

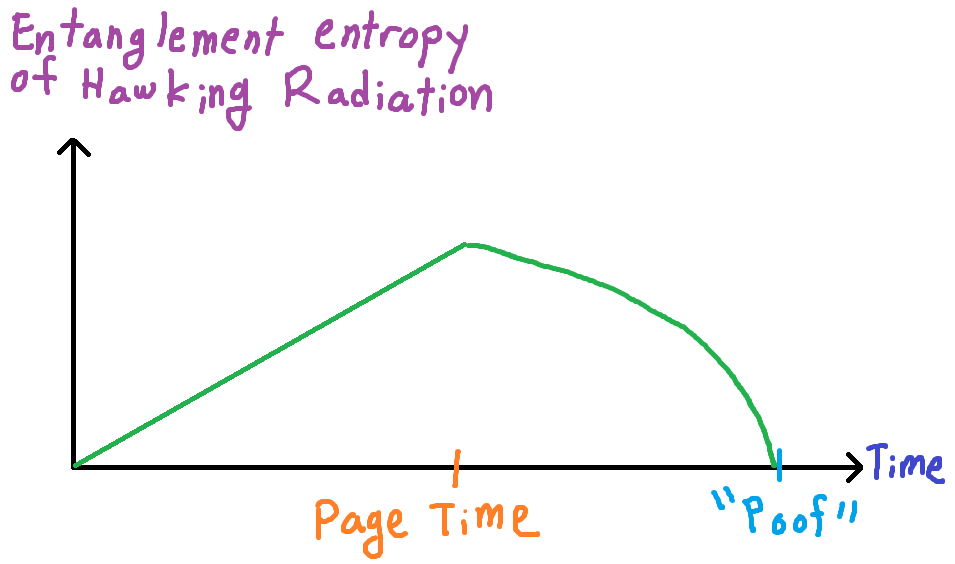

Don Page proposed the following graph of what he thought the entanglement entropy of this density matrix should look like. It is fittingly called the “Page curve.”

- If $k < n/2$ (fewer than half of the $n$ qubits have come out as radiation), rank($\rho$) = $2^k$, as there are enough terms in the sum to get a maximally ranked matrix. Hence, we get maximally mixed states. The radiation we collect at early times will still remain heavily entangled with the degrees of freedom near the black hole, and as such the state will look mixed to us because we cannot yet observe all the complicated entanglement. As more and more information leaves the black hole in the form of Hawking radiation, we are “tracing out” fewer and fewer of the near-horizon degrees of freedom. The dimension of our density matrix grows bigger and bigger, and because the outgoing radiation is still so entangled with the near-horizon degrees of freedom, the density matrix will still have off-diagonal terms which are essentially zero. Hence, the state entropy increases linearly.

- But if $k > n/2$ (more than half of the $n$ qubits have come out as radiation), by the same argument, rank($\rho$) = $2^{n-k}$. Hence, the density matrix becomes more and more pure. Once the black hole’s entropy has reduced by half, the dimension of the Hilbert space we are tracing out finally becomes smaller than the dimension of the Hilbert space we are not tracing out. The off-diagonal terms spring into our density matrix, growing in size and number as the black hole continues to shrink. Finally, once the black hole is gone, we can easily see that all the resulting radiation is in a pure state.
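The rise and fall described in these two cases can be reproduced in a toy model. The sketch below assumes that the combined state of the black hole and its radiation is a random pure state on a small number of qubits, a common stand-in for a thoroughly scrambled system, and treats the first qubits as the collected radiation. For each split it computes the rank of the radiation's density matrix and its entanglement entropy from the singular values of the reshaped state vector. The system size, the seed, and the function names are illustrative choices.

```python
import numpy as np


def random_pure_state(n_qubits, rng):
    """Random pure state: normalized vector of complex Gaussian amplitudes."""
    dim = 2 ** n_qubits
    vec = rng.normal(size=dim) + 1j * rng.normal(size=dim)
    return vec / np.linalg.norm(vec)


def radiation_entropy(state, n_qubits, k):
    """Entanglement entropy (in bits) of the first k qubits of a pure state."""
    matrix = state.reshape(2 ** k, 2 ** (n_qubits - k))
    singular_values = np.linalg.svd(matrix, compute_uv=False)
    probs = singular_values ** 2  # eigenvalues of the reduced density matrix
    probs = probs[probs > 1e-12]
    return float(-np.sum(probs * np.log2(probs)))


def radiation_rank(state, n_qubits, k):
    """Rank of the reduced density matrix of the first k qubits."""
    matrix = state.reshape(2 ** k, 2 ** (n_qubits - k))
    return int(np.linalg.matrix_rank(matrix))


n = 10  # total number of qubits in the toy model
rng = np.random.default_rng(1)
state = random_pure_state(n, rng)

page_curve = [radiation_entropy(state, n, k) for k in range(n + 1)]
ranks = [radiation_rank(state, n, k) for k in range(n + 1)]

for k in range(n + 1):
    print(f"k = {k:2d}  rank = {ranks[k]:3d}  entropy = {page_curve[k]:.3f} bits")
```

The rank follows $\min(2^k, 2^{n-k})$, and the entropy climbs almost linearly until about half of the qubits have been collected, then falls back to zero once all of them are outside.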

The entanglement entropy of the outgoing radiation finally starts decreasing, as we are finally able to start seeing entanglements between all this seemingly random radiation we have painstakingly collected. Some people like to say that if one could calculate the Page curve from first principles, the information paradox would be solved. Now we are ready to state the firewall paradox.

The Firewall Paradox

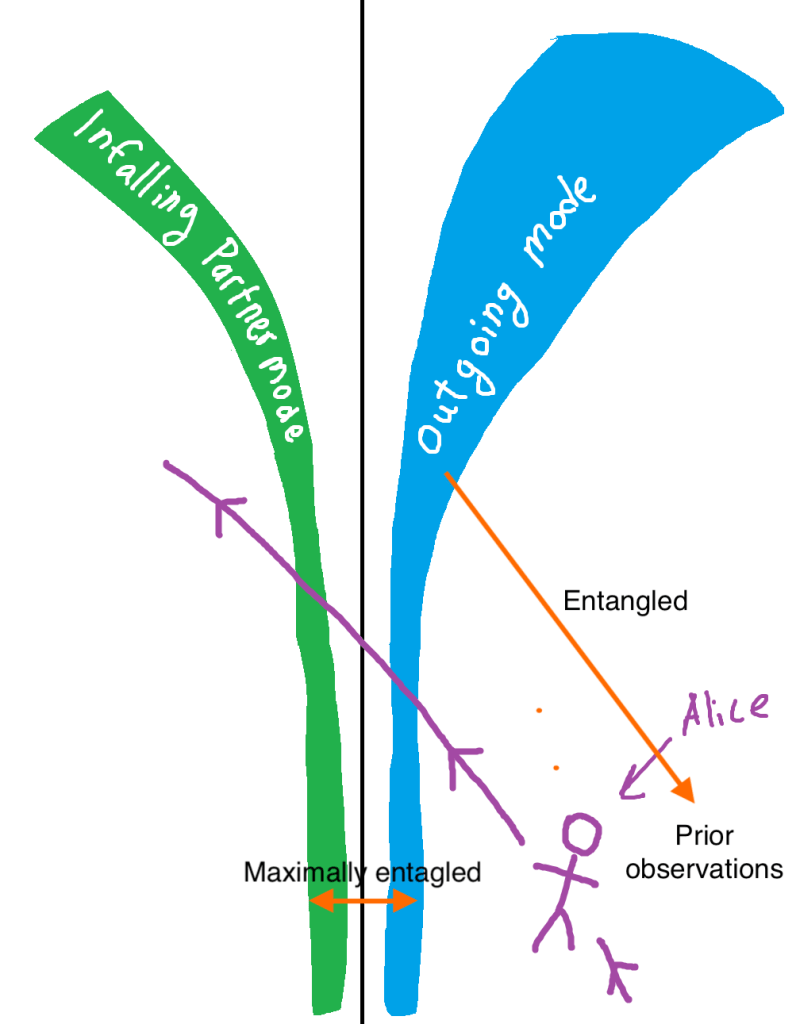

Say Alice collects all the Hawking radiation coming out of a black hole. Some time after the Page time, Alice is able to see significant entanglement in all the radiation she has collected. Alice then dives into the black hole and sees an outgoing Hawking mode escaping. Given the Page curve, we know that this outgoing mode must decrease the entropy of our observed mixed state. In other words, it must make our observed density matrix purer, and hence it must be entangled with the particles we have already collected.

(Another way to think about this: let’s say that a random quantum circuit at the horizon scrambles the information in a non-trivial yet injective way in order for radiation particles to encode the information regarding what went inside the black hole. The output qubits of the circuit must be highly entangled due to the random circuit.)

Alice diving into the black hole after the Page time to see the outgoing mode emerge.

However, given our discussion on Hawking radiation about short-range entanglement, the outgoing mode must be maximally entangled with an in-falling partner mode. This contradicts monogamy of entanglement! The outgoing mode cannot be entangled both with the radiation Alice has already collected and also maximally entangled with the nearby infalling mode!

So, to summarize, what did we do? We started with the existence of black holes and, through our game of conservative radicalism, modified how physics works around them in order to make sure the following cherished physics principles are not violated by these special objects:

- Second Law of Thermodynamics

- Objects with entropy emit thermal radiation

- Unitary evolution and reversibility

- No-cloning theorem

- Monogamy of entanglement

And finally, we ended with the Firewall paradox.

So, for the last time in this blog post, what gives?

- Firewall solution: The first solution to the paradox is the existence of a firewall at the horizon. The only way to not have the short-range entanglement discussed is if there is very high energy density at the horizon. However, this violates the “no-drama” theorem and Einstein’s equivalence principle of general relativity which states that locally there should be nothing special about the horizon. If firewalls did exist, an actual wall of fire could randomly appear out of nowhere in front of us right now if a future black hole would have its horizon near us. Hence, this solution is not very popular.

- Remnant solution: One possible resolution would be that the black hole never “poofs” but that some quantum gravity effect we do not yet understand stabilizes it instead, allowing some Planck-sized object to stick around. Such an object would be called a “remnant.” The so-called “remnant solution” to the information paradox is not a very popular one. People don’t like the idea of a very tiny, low-mass object holding an absurdly large amount of information.

- No unitary evolution: Perhaps, black holes are special objects which actually lose information! This would mean that black hole physics (the quantum theory of gravity) would be considerably different compared to quantum field theory.

- Computational complexity solution?: Can anyone ever observe this violation? And if not, does that resolve the paradox? This will be covered in our next blog post by Parth and Chi-Ning.

References

- Scott Aaronson. The complexity of quantum states and transformations: from quantum money to black holes. arXiv preprint arXiv:1607.05256, 2016.

- Daniel Harlow. Jerusalem lectures on black holes and quantum information. Reviews of Modern Physics, 88(1):015002, 2016.

- Ahmed Almheiri, Donald Marolf, Joseph Polchinski, and James Sully. Black holes: complementarity or firewalls? Journal of High Energy Physics, 2013(2):62, 2013.
