(This post from the lecture by Yueqi Sheng)

In this post, we will talk about detecting phase transitions using

Approximate-Message-Passing (AMP), which is an extension of

Belief-Propagation to “dense” models. We will also discuss the Replica

Symmetric trick, which is a heuristic method of analyzing phase

transitions. We focus on the Rademacher spiked Wigner model (defined

below), and show how both these methods yield the same phrase transition

in this setting.

The Rademacher spiked Wigner model (RSW) is the following. We are given

observations where

(sampled uniformly) is the true signal and

is a

Gaussian-Orthogonal-Ensemble (GOE) matrix:

for

and

. Here

is the signal to noise

ratio. The goal is to approximately recover .

The question here is: how small can be such that it is

impossible to recover anything reasonably correlated with the

ground-truth ? And what do the approximate-message-passing algorithm

(or the replica method) have to say about this?

To answer the first question, one can think of the task here is to

distinguish vs

. One approach to distinguishing these distributions is to

look at the spectrum of the observation matrix . (In fact, it turns

out that this is an asymptotically optimal distinguisher [1]). The spectrum of behaves as ([2]):

- When

, the empirical distribution of eigenvalues in

spiked model still follows the semicircle law, with the top

eigenvalues -

When

, we start to see an eigenvalue

in the

planted model.

Approximate message passing

This section approximately follows the exposition in [3].

First, note that in the Rademacher spiked Wigner model, the posterior

distribution of the signal conditioned on the observation

is: This

defines a graphical-model (or “factor-graph”), over which we can perform

Belief-Propogation to infer the posterior distribution of .

However, in this case the factor-graph is dense (the distribution is a

product of potentials for all

pairs of ).

In the previous blog post, we saw belief propagation works great when the underlying interaction

graph is sparse. Intuitively, this is because is locally tree like,

which allows us to assume each messages are independent random

variables. In dense model, this no longer holds. One can think of dense

model as each node receive a weak signal from all its neighbors.

In the dense model setting, a class of algorithms called Approximate

message passing (AMP) is proposed as an alternative of BP. We will

define AMP for RWM in terms of its state evolution.

State evolution of AMP for Rademacher spiked Wigner model

Recall that in BP, we wish to infer the posterior distributon of

, and the messages we pass between nodes correspond to marginal

probability distribution over values on nodes. In our setting, since the

distributions are over , we can represent distributions by

their expected values. Let denote the

message from to

at time

. That is,

corresponds

to the expected value .

To derive the BP update rules, we want to compute the expectation

of a node

, given the

messages for

. We can

do this using the posterior distribution of the RWM, ,

which we computed above.

And similarly for .

From the above, we can take expectations over , and express

in terms of

. Doing this (and

using the heuristic assumption that the distribution of is a

product distribution), we find that the BP state update can be written

as:

where the interaction matrix , and

.

Now, Taylor expanding around

, we find

since the terms are of order

.

At this point, we could try dropping the “non-backtracking” condition

from the above sum (since the node

contributes at most

to the sum anyway), to get the state update:

(note the messages no longer

depend on receiver – so we write in place of

).

However, this simplification turns out not to work for estimating the

signal. The problem is that the “backtracking” terms which we added

amplify over two iterations.

In AMP, we simply perform the above procedure, except we add a

correction term to account for the backtracking issue above. Given ,

for all , the AMP update is:

The correction term corresponds to error introduced by the backtracking

terms. Suppose everything is good until step . We will examine

the influence of backtracking term to a node through length 2 loops.

At time ,

exert

additional influence to

each of it’s neighbor . At time

,

receive roughly

. Since

has magnitude

and we need to sum over all of

’s neighbors,

this error term is to large to ignore. To characterize the exact form of

correction, we simply do a taylor expansion

State evolution of AMP

In this section we attempt to obtain the phase transition of Rademacher

spiked Wigner model via looking at .

We assume that each message could be written as a sum of signal term and

noise term. where

. To the dynamics of AMP (and find its phase

transition), we need to look at how the signal and noise

evolves with

.

We do the following simplification: ignore the correction term and

assume each time we obtain an independent noise .

Here, we see that

and .

Note that is essentially proportional to overlap between

ground truth and current belief, since the function keeps the

magnitude of the current beliefs bounded.

For the noise term, each coordinate of is a gaussian random

variable with mean and variance

It was shown in [4] that we can introduce a new

parameter s.t.

As , turns out

and

. To study the behavior of

as

, it is enough to track the evolution of

.

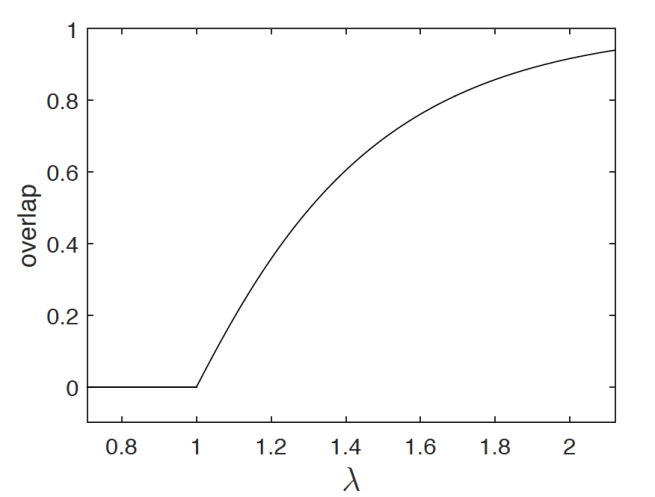

This heuristic analysis of AMP actually gives a phase transition at

(in fact, the analysis of AMP can be done rigorously as in [5]):

- For

: If

,

w.h.p., thus we have

. Taking

, we have

, which means there AMP solution has no overlap with the ground truth.

-

For

: In this case, AMP’s solution has some correlation with the ground truth.

(Figure from [6])

Replica symmetry trick

Another way of obtaining the phase transition is via a non-rigorous

analytic method called the replica method. Although non-rigorous, this

method from statistical physics has been used to predict the fixed point

of many message passing algorithms and has the advantage of being easy

to simulate. In our case, we will see that we obtain the same phase

transition temperature as AMP above. The method is non-rigorous due to

several assumptions made during the computation.

Outline of replica method

Recall that we are interested in minizing the free energy of a given

system where

is

the partition function as before:

and

.

In replica method, is not fixed but a random variable. The

assumption is that as , free energy doesn’t vary with

too much, so we will look at the mean of to approximate free

energy of the system.

is called the free energy density and the goal now is to

compute the free energy density as a function of only , the

temperature of the system.

The replica method is first proposed as a simplification of the

computation of

It is a generally hard problem to compute in a clear way. A

naive attempt of approximate is to simply pull the log out

Unfortunately and

are quite different quantities,

at least when temperature is low. Intuitively, is looking at

system with a fixed while in

,

and

are allowed to

fluctuate together. When the temperature is high, doesn’t play a big

roll in system thus they could be close. However, when temperature is

low, there could be a problems. Let ,

,

.

While is hard to compute,

is a much easier quantity. The

replica trick starts from rewriting with moments of

:

Recall that for

and

, using this we can rewrite

in the following

way:

Claim 1. Let

Then,

The idea of replica method is quite simple

- Define a function

for

s.t.

for all such

.

-

Extend

analytically to all

and take the limit of

.

The second step may sound crazy, but for some unexplained reason, it has

been surprisingly effective at making correct predictions.

The term replica comes from the way used to compute

in Claim 1. We expand the

-th moment

in terms of replicas of the system

For Rademacher spiked Wigner model

In this section, we will see how one can apply the replica trick to

obtain phase transition in the Rademacher spiked Wigner model. Recall

that given a hidden , the observable

where

and

.

We are interested in finding the smallest where we can still

recover a solution with some correlation to the ground truth . Note

that is not so important here as

doesn’t carry

any information in this case.

Given by the posterior , the system we

set up corresponding to Rademacher spiked Wigner model is the following:

- the system consists of

particles and the interactions between

each particle are give by -

the signal to noise ratio

as the inverse temperature

.

Following the steps above, we begin by computing

for : Denote

where

is the

th replica of the system.

We then simplify the above expression with a technical claim.

Claim 2. Let where

is a fixed matrix and

is the GOE matrix defined as above. Then,

for some constant depending on distribution of

.

Denote . Apply Claim 2 with

, we have

To understand the term inside exponent better, we can rewrite the inner

sum in terms of overlap between replicas:

where the last equality follows from rearranging and switch the inner

and outer summations.

Using a similar trick, we can view the other term as

Note that represents overlaps between the

and

th replicas and

represents the

overlaps between the th replica and the ground truth vector.

In the end, we get for any integer , (Equation 1):

Our goal becomes to approximate this quantity. Intuitively, if we think

of as indices on a

matrices,

,

with , then

is the average of

i.i.d matrices. So we

expect for

w.h.p. In the

remaining part, We find the correct via rewriting Equation 1.

Observe that by introducing a new variable for

and

using the property of gaussian intergal (Equation 4):

Replace each by a such integral, we

have (Equation 2):

where is the constant given by introducing gaussian intergals.

To compute the integral in (Equation 2), we need to cheat a little bit and take

before letting

. Note that free energy density

is defined as

This is the second assumption made in the replica method and it is

commonly believed that switching the order is okay here. Physically,

this is plausible because we believe intrinsic physical quantities

should not depend on the system size.

Now the Laplace method tells us when , the integral in (Equation 2) is dominated by the max of the exponent.

Theorem 1 (Laplace Method). Let , then

where and

is the Hessian of

evaluated at the point

.

Fix a pair of and apply Laplace method with

what’s left to do is to find the critical point of . Taking the

derivatives gives

where

.

We now need to find a saddle point of where the hessian is PSD. To

do that, we choose to assume the order of the replicas does not matter,

which is refer to as the replica symmetry case. 1 One simplest form

of is the following:

,

and

for some

. This also implies that

for some

and

Plug this back in to Equation 2 gives: (Equation 3)

To obtain , we only need to deal with the last term in

(Equation 3) as . Using the fact that

for all

and using the same trick of introducing new gaussain integral as

in (Equation 4) we have

Using the fact that we want the solution to minimizes free energy,

taking the derivative of the current w.r.t.

gives

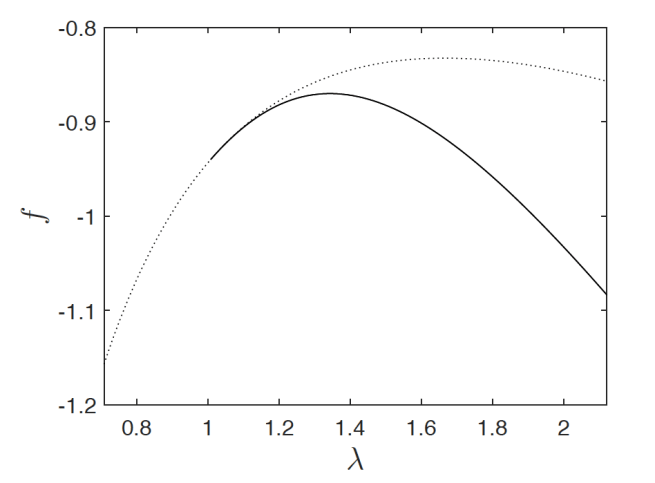

which matches the fixed point of AMP. Plug in and

will give us

. The curve of

looks like the Figure below, where

the solid line is the curve of with the given

and the

dotted line is the curve given by setting all variables .

References

-

Turns out for this problem, replica symmetry is the only case. We

will not talk about replica symmetry breaking here, which

intuitively means we partition replicas into groups and re-curse. ↩